
A daily journal from my 2016-2017 Fellowship at the Whitney Museum

As for the direction of the project, after reading Joshua’s documentation of his work this past year, I’m thinking I might like to focus on enriching data on collection objects or creating relationships between them, unless you think it would be better to continue Joshua’s focus on the museum’s artists. Given the project’s limited scope (1931-48), I assume this would mean objects either created or acquired during this period?

I recently read an article on projects created by SVA’s MFA in Visual Narrative program based on mapping collections at the Metropolitan Museum (http://hyperallergic.com/314638/interactive-maps-of-the-metropolitan-museum-offer-fresh-views-of-its-permanent-collections/). Students created interactive maps based partly on relationships between objects in the collection, such as indigo objects or sculptures depicting the female form. I’m thinking it might be interesting to similarly map, or create some kind of interactive interface connecting, objects in the Whitney collection based on shared traits or themes. This might be done using information from TMS fields such as Medium, or through enrichment from an outside source.

Joshua mentioned expanding on projects by the Smithsonian American Art Museum or the Yale Center for British Art as a possible future direction for the project. The Smithsonian website discusses their use of the CIDOC Conceptual Reference Model ontology to map relationships between objects in their collection (http://americanart.si.edu/collections/search/lod/about/). In reading about CIDOC CRM, I came across a project called ResearchSpace being developed by the British Museum (http://www.researchspace.org/). Their Semantic Search component integrates data from Wikidata to allow users to search for objects based on the relationships of entities to one another. Joshua mentions using data from Wikidata and the Art and Architecture Thesaurus to enrich the data he pulled from TMS, and I wonder how this enriched data could potentially be used to create links between objects.

Joshua also mentions provenance and/or exhibition data as another possible area for further work. Another project direction I’m considering would involve linking or mapping objects to other institutions where they were previously shown. I did a lot of research on object provenance in my former job at an art gallery, and I wonder whether and how it might be possible to make this process easier and more intuitive for the museum’s researchers. It might also be interesting to enrich the museum’s data on the exhibition history of its artists using data from outside sources, or, alternatively, to add places where artists worked or exhibited to Joshua’s existing map of places of birth and death.

Using TMS search, identify all objects acquired between 1931-1948

Generate report on exhibition history of each piece

Connect these places to entries on Wikidata. Could also refer to Artsy API.

Collect images of objects from TMS

Create script to link objects to institutional data

Could chart artistic media/artists represented in 1931-48 acquisitions in the vein of Oliver Roeder’s FiveThirtyEight article using MoMA API data.

Having started Matt Miller’s Programming for Cultural Heritage class, I looked at a few of the past museum- and art-focused projects done by students in the class to get some ideas. One interesting project was Carlos Acevedo’s DBO: Influence project, focused on the influence property in DBpedia’s ontology as it relates to contemporary artists. I wasn’t able to access Carlos’ final csv file or the visualizations he created in Gephi, but exploring a relationship property like influence seems interesting. I noticed Wikidata has “student of”, “educated at”, and “notable work” properties for some artists, for instance.

Another Programming for Cultural Heritage project I looked at was Analyzing Modernism: Modern and Contemporary Painting and Sculpture at The Metropolitan Museum of Art. This project seems to have used data strictly from the Met’s collection site, but it has some interesting ideas for how to visualize collection data. Tableau and TimelineJS might be tools to explore in more depth at some point.

In order to narrow down some possible entities to focus on from the Whitney’s founding collection, I began browsing in TMS to see where objects in the collection were purchased from. Many objects in the Whitney’s founding collection were purchased directly from their creators, but I did find the names of some dealers and organizations, including:

The Cosmopolitan Club: A private all-women’s social club on the Upper East Side

(https://en.wikipedia.org/wiki/Cosmopolitan_Club_(New_York))

Ferargil Galleries: A commercial art gallery, run from 1915-1955 by Fredric Newlin Price. They dealt mostly in American art (http://www.aaa.si.edu/collections/ferargil-galleries-records-8905)

The Whitney Studio Club: the antecedent to the Whitney Museum (http://cdm16694.contentdm.oclc.org/cdm/landingpage/collection/p15405coll1)

Roman Bronze Works: A bronze factory in Corona, Queens that was the country’s leading art foundry during the American Renaissance. (https://en.wikipedia.org/wiki/Roman_Bronze_Works)

Marie Sterner Fine Arts (http://www.aaa.si.edu/collections/marie-sterner-and-marie-sterner-gallery-papers-9479)

Daniel Chester French: An artist with work in the founding collection, known for his Lincoln Memorial statue. I was interested to read in the notes field for one of his pieces in TMS that his studios were located on MacDougal Alley, fairly close to the current location of the Whitney.

https://en.wikipedia.org/wiki/Daniel_Chester_French

http://www.metmuseum.org/toah/hd/fren/hd_fren.htm

C.W. Kraushaar Art Galleries: Founded in 1885 and still in existence today. Kraushaar Galleries sold many early Whitney collection items, including Charles Demuth’s “My Egypt”.

(https://en.wikipedia.org/wiki/Kraushaar_Galleries)

(http://www.kraushaargalleries.com/history/)

(http://gildedage2.omeka.net/)

Valentine Gallery: 1924-1948, founded by F. Valentine Dudensing.

(http://www.aaa.si.edu/collections/valentine-gallery-records-7103)

Wildenstein Galleries: Still in operation today.

Frank K.M. Rehn Galleries: 1918-1981. Lots of correspondence between Rehn and Juliana Force, as well as with the Whitney Studio Club, is available online.

(http://www.aaa.si.edu/collections/frank-km-rehn-galleries-records-9193)

(http://www.aaa.si.edu/collections/container/viewer/Whitney-Studio-Club-Wills–203619)

N.E. Montross: (https://gildedage.omeka.net/exhibits/show/galleriesandclubs/galleries/montross)

Max Kuehne: In addition to Kuehne’s own work, the founding collection also contains work by other artists donated by Kuehne.

The Downtown Gallery: Run by Edith Halpert

(https://en.wikipedia.org/wiki/Edith_Halpert)

Macbeth Gallery: 1892-1953

(http://www.aaa.si.edu/collections/macbeth-gallery-records-9703)

After my second week of classes and listening to Professor Pattuelli lecture on Linked Open Data, I have a somewhat better sense of how the RDF triples used in LOD work, and how to use them to express relationships. Our discussion of LIDO and CIDOC-CRM in Art Documentation has made me start thinking about how to map the CIDOC ontology onto this triple structure when working with the Whitney’s data. Joshua used Schema.org vocabulary to build relationships in his dataset. I wonder whether the CIDOC-CRM ontology might offer a richer set of relationship terms, and whether it might make the Whitney’s data more interoperable with that of other cultural institutions. At the same time, the broad scope of the Schema.org ontology might make the Whitney’s data more accessible outside of the museum world.

The “student of”, “teacher of”, and “fellow student of” properties in ULAN seem like one potential avenue to explore relationships between artists in the Whitney’s founding collection. Robert Henri, for instance, was a teacher of many Ashcan School artists and an influential figure in the movement. A visualization of the Whitney’s collection data could potentially be built around central figures like Henri.

I also wonder whether it might be possible to collect data from a source like Grove Art Online to build relationships. I was just introduced to Grove Art Online in the Art Librarianship class at Pratt, and noticed that it is one of the reference sources used by the Art and Architecture Thesaurus. Grove Art has relationship data on what movements artists are associated with, their patrons and collectors, the materials they used, and people they collaborated with. Grove Art is subscription-based and not openly accessible, however, so I don’t know if it’s acceptable as a source of data.

As well as thinking about ontologies and relationship data, I also looked at how the British Museum has chosen to present its linked data online. In addition to its standard online collection site, the British Museum publishes its data in a machine-readable format, organized using CIDOC CRM. Users can access collection data in a variety of resolvable RDF formats, and can also search the collection with SPARQL queries through a dedicated interface (http://collection.britishmuseum.org/). The British Museum’s Semantic Web Collection Online may be a good model for publishing the datasets Joshua created this past year, as well as any future data collected during the course of this project.

I’m curious to what extent object metadata can be pulled from TMS. I found these applications, though given that the Whitney already has an online collection, they may be redundant:

http://binder.readthedocs.io/en/latest/user-manual/overview/intro.html

https://github.com/smoore4moma/tms-api

I’m interested in generating csv file(s) from TMS with provenance info for objects in the Founding Collection, similar to how Joshua created birth info and death info files for Founding Collection artists. I’ve started exploring what kind of reports TMS can generate, and how best to combine non-artist constituent data from these reports into a single database.

In TMS, I created four object packages sorted by credit line, using Joshua’s object package for the Founding Collection. I first searched TMS for objects with acquisition dates of 1948 or earlier to make sure Joshua’s Founding Collection package is complete. I then broke down his package by whether the object was a gift, purchase, or exchange, or whether the record was missing a credit line.

I only found eight objects in the Founding Collection missing credit lines. These objects have provenance information recorded in the Constituent or Provenance field, but not the Credit Line field:

| Object number | Provenance |
| --- | --- |
|  | n.d. collection of the artist; -1931 collection of Gertrude Vanderbilt Whitney, New York, New York; 1931 Whitney Museum of American Art, New York (gift of Gertrude Vanderbilt Whitney) |
| 31.229 | 1925- collection of the artist; 1931 Whitney Museum of American Art, New York, New York |
| 31.321 | 1927- collection of the artist; 1931 Whitney Museum of American Art, New York |
| 31.324 | 1930- collection of the artist; 1931 Whitney Museum of American Art, New York |
| 31.335 | 1930 collection of the artist; 1931 Whitney Museum of American Art, New York |
| 31.370 | 1930- collection of the artist; 1931 Whitney Museum of American Art, New York |
| 31.378 |  |
| 31.380 | -1929 collection of the artist; 1929-1931 collection of Gertrude Vanderbilt Whitney, New York, New York; 1931 Whitney Museum of American Art, New York (gift of Gertrude Vanderbilt Whitney) |
| 31.964 | n.d. collection of the artist; 1930-1931 collection of Gertrude Vanderbilt Whitney, New York, New York (sold through Frank K. M. Rehn, Inc., New York, New York); 1931 Whitney Museum of American Art, New York (gift of Gertrude Vanderbilt Whitney) |

In looking at objects in the Founding Collection noted in the Credit Line field as purchases, I’m noticing that a significant number are recorded in the Constituents field as being sourced/purchased directly from the artist. Since constituents in TMS may have multiple roles (both object-related and acquisition-related), separating galleries/dealers from artists may be complicated. Checking acquisition-related constituents in the ‘purchase’ package against object-related constituents, possibly using a script or an application like OpenRefine, may be a way to find duplicate constituents. Joshua already created a cleaned-up artist data file, which could be used as a basis for separating out artists with dual object- and acquisition-related roles.

Pulling names from TMS’ free-text fields like Provenance and Notes may be another way to find acquisition-related constituents. I know Prof. Matt Miller created an analyzer tool for the Linked Jazz project at Pratt meant to extract names from oral history transcripts (https://github.com/thisismattmiller/linked-jazz-prototype-transcript). I’m not sure how well it works or whether it’s ever been used outside the context of Linked Jazz, but it might be worth exploring. I could also manually look through the text fields for names. There are only 1,040 purchased works in the Founding Collection, so doing so might not be prohibitively time-consuming.
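A crude first pass, before trying something like the Linked Jazz analyzer, could be a regular expression that pulls out runs of capitalized words and initials. I'm assuming this would be good enough for triage only. It is not real named-entity recognition: it also catches place names such as "New York", and it splits names joined by lowercase words like "of". The function name `candidate_names` is hypothetical.

```python
import re

# Heuristic: two or more consecutive capitalized words or single initials
# (e.g. "Frank K. M. Rehn"). A lowercase word such as "of" ends a match,
# so "Whitney Museum of American Art" comes out as two separate pieces.
NAME_PATTERN = re.compile(
    r"\b(?:[A-Z][a-z]+|[A-Z]\.)(?:\s+(?:[A-Z][a-z]+|[A-Z]\.))+"
)

def candidate_names(text):
    """Return capitalized word sequences in order of appearance, without repeats."""
    found = []
    for match in NAME_PATTERN.findall(text):
        if match not in found:
            found.append(match)
    return found

note = ("n.d. collection of the artist; 1930-1931 collection of Gertrude "
        "Vanderbilt Whitney, New York, New York (sold through Frank K. M. "
        "Rehn, Inc., New York, New York)")
print(candidate_names(note))
```

The output would still need a manual check against the constituents spreadsheet, since places and institutions come back mixed in with people.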

I generated a ‘Text Entries’ report for the ‘purchased’ package I made in TMS to get a better overview of what kind of information is in TMS’ notes fields. I haven’t found much new information on dealers, though I have found some interesting notes on other entities, like the names of people depicted in portraits, as well as names of friends, family members, and collaborators of various artists. I wonder whether collecting data on these people would be worthwhile, or whether it would be better to focus strictly on acquisitions. Additionally, I’ve seen some place names, such as the locations of scenes depicted in landscape paintings.

One issue I’ve noticed in the “Artist Biography-Online Publication” free-text field is that these biographies occasionally refer to the wrong artist. I’m not sure whether this is an issue with TMS or a case of information being pulled from the wrong online sources.

I found a Wikipedia page for Frank Knox Morton Rehn, a painter and president of the Salmagundi Club (https://en.wikipedia.org/wiki/Frank_Knox_Morton_Rehn). Frank K. M. Rehn is noted as the acquisition source for 18 works in the Founding Collection, and VIAF lists F. K. M. Rehn as an alternate name for Frank Knox Morton Rehn (https://viaf.org/viaf/21155834/), but apparently Frank K. M. Rehn the gallerist was Frank Knox Morton Rehn’s son (http://www.aaa.si.edu/collections/frank-km-rehn-galleries-records-9193/more).

I’m wondering if the Subject Terms field in TMS might offer an alternative to exploring provenance. It might be interesting to look at possible subject trends in the Founding Collection; there seems to be a trend toward figurative work, for instance.

I’ve started a spreadsheet to record acquisition-related constituents for purchased objects in the Founding Collection. I’m noting each constituent’s name, name authority record, the objects they are constituents of, their relation to the object (artist, dealer, etc.), links to sites with more information, and possible connections to explore:

https://docs.google.com/spreadsheets/d/1j7QAm3GGaIfDTKSVvVaU9_tGgg6v0f-jMoefZJ9jSdU/edit?usp=sharing

One connection I’ve noticed among a few of the artist constituents is activity in Woodstock and membership in the Woodstock Artists Association. I went up to Woodstock earlier this year to visit the Estate of Philip Guston and to interview their archivist, Emily Jones. Emily is also the archivist for the Woodstock Artists Association, and gave me a lot of historical background on Woodstock and its importance as an artists’ community, something I was only vaguely aware of beforehand. It seems like a fair number of WAA artists are represented in the Founding Collection, so this may be one area to explore further.

DBpedia and ULAN both seem to have richer sets of relationship properties than Wikidata, so they might be more useful resources to query. Robert Henri’s DBpedia page, for instance, has “movement”, “training”, “influenced”, “influenced by”, and “seeAlso” properties. Again, however, pulling data from DBpedia may be beyond my technical capacities at the moment.
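For reference, a query for Henri's relationship properties might look like the sketch below. It assumes DBpedia's public SPARQL endpoint at `https://dbpedia.org/sparql` accepts `query` and `format` parameters, and it uses `dbo:` ontology versions of the properties named above. I haven't checked which of them are actually populated for Henri. If some live under the `dbp:` infobox namespace instead, the `VALUES` list would need adjusting. The network call is left commented out.

```python
import json
import urllib.parse
import urllib.request

DBPEDIA_ENDPOINT = "https://dbpedia.org/sparql"

def build_query(resource_uri):
    """SPARQL asking for a few relationship properties of one DBpedia resource."""
    # VALUES restricts ?property to the ontology properties of interest.
    return f"""
PREFIX dbo: <http://dbpedia.org/ontology/>
SELECT ?property ?value WHERE {{
  VALUES ?property {{ dbo:movement dbo:training dbo:influenced dbo:influencedBy }}
  <{resource_uri}> ?property ?value .
}}"""

def build_url(resource_uri):
    """Encode the query and the requested result format as URL parameters."""
    params = {"query": build_query(resource_uri),
              "format": "application/sparql-results+json"}
    return DBPEDIA_ENDPOINT + "?" + urllib.parse.urlencode(params)

def fetch_relationships(resource_uri):
    """Run the query over HTTP and return (property, value) pairs."""
    with urllib.request.urlopen(build_url(resource_uri), timeout=30) as response:
        results = json.load(response)
    return [(row["property"]["value"], row["value"]["value"])
            for row in results["results"]["bindings"]]

# Makes a network request, so left commented out here:
# fetch_relationships("http://dbpedia.org/resource/Robert_Henri")
```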

The first step for applying this process to Provenance would be to export the “imoec_founding_collection_purchase” package in TMS as a csv file. I haven’t figured out how to generate csv files from TMS yet, but this would be a starting point. I’m just learning how to search fields and convert csv files to JSON, so I should at least be able to start cleaning a Provenance csv file.
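Once a CSV export exists, converting it to JSON needs nothing beyond Python's standard library. This is a minimal sketch, assuming the export has a header row. The `ObjectNumber` and `Provenance` column names are placeholders for whatever TMS actually produces.

```python
import csv
import io
import json

def csv_to_json(csv_file, indent=2):
    """Read a CSV export (header row first) into a JSON array of objects."""
    rows = [dict(row) for row in csv.DictReader(csv_file)]
    return json.dumps(rows, indent=indent, ensure_ascii=False)

# In-memory stand-in for an exported file; column names are hypothetical.
sample = io.StringIO(
    "ObjectNumber,Provenance\n"
    '31.229,"1925- collection of the artist; 1931 Whitney Museum of American Art, New York, New York"\n'
)
print(csv_to_json(sample))
```

For a real file, open it with `open(path, newline="", encoding="utf-8")` before passing it in. The encoding is a guess about what TMS exports.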

Python doesn’t seem to be currently installed on my workspace computer, and I keep getting denied permission to install it on my user account. I’m not sure whether it would be better to contact Whitney IT or simply to use my laptop for Python programming. I’m more familiar with working with a Mac environment, so working on my laptop might be easier.

Of note: the Carnegie Museum of Art was recently involved with a data-related project focused on provenance. It led to the creation of a Ruby library for generating provenance records:

http://www.museumprovenance.org/

https://github.com/arttracks/museum_provenance

I’m kind of curious how the tables that make up the backend of the TMS database are organized. I’m taking Database Design this semester, and am eventually going to start working with MySQL in more depth. I’m wondering whether it would be worthwhile to make a MySQL database that could store provenance information in a more normalized form than TMS. I know Joshua already made a MySQL database for outputting URIs, so maybe I could augment his database with Provenance info.

I downloaded and looked at Joshua’s various object and artist csv files, as well as his “lod_test.sql” file. I’m not that familiar with SQL at this point, but I can roughly see how his MySQL database is set up, with an Objects table and a People table. At this point the two tables aren’t connected to one another. It seems like it would make sense at some point for URIs created from the Objects table to relate to URIs created from the People table, however. Object resource URIs from the British Museum’s collection, for example, contain links to Person/Institution URIs relating to the object, such as “has former or current owner”. I’m not sure how to do this from a technical standpoint yet. To indicate something like provenance, it might make sense to enter acquisition-related constituents into the current People table, or to create separate Object-related and Acquisition-related Constituent tables, and to somehow connect the Object and People tables when outputting PHP files. I guess just putting acquisition-related constituents into a tabular format would be a start. Additionally, since acquisition-related constituents may be institutions like galleries, maybe it would make sense to create an Institutions table, or to change People to Person-Institution?
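To picture how the Objects and People tables could be connected, here is a sketch of a junction table. It records one row per object/constituent relationship, with the relationship type in a `role` column, similar in spirit to the British Museum's "has former or current owner" links. SQLite stands in for MySQL so the sketch runs on its own. The table names, the columns, and the `is_institution` flag (one way to handle galleries without a separate Institutions table) are all hypothetical, not Joshua's `lod_test.sql` schema.

```python
import sqlite3

SCHEMA = """
CREATE TABLE objects (object_id INTEGER PRIMARY KEY, object_number TEXT, title TEXT);
CREATE TABLE people (person_id INTEGER PRIMARY KEY, name TEXT, is_institution INTEGER DEFAULT 0);
-- One row per object/constituent relationship; role says what kind it is.
CREATE TABLE object_constituents (
    object_id INTEGER REFERENCES objects(object_id),
    person_id INTEGER REFERENCES people(person_id),
    role TEXT NOT NULL,
    PRIMARY KEY (object_id, person_id, role)
);
"""

def build_demo():
    """In-memory database with one object (31.964) and two acquisition-related constituents."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.execute("INSERT INTO objects VALUES (1, '31.964', NULL)")
    conn.executemany("INSERT INTO people VALUES (?, ?, ?)", [
        (1, "Gertrude Vanderbilt Whitney", 0),
        (2, "Frank K. M. Rehn, Inc.", 1),
    ])
    conn.executemany("INSERT INTO object_constituents VALUES (?, ?, ?)", [
        (1, 1, "former owner"),
        (1, 2, "dealer"),
    ])
    return conn

def constituents_for(conn, object_number):
    """List (name, role) pairs linked to one object, joined through the junction table."""
    return conn.execute("""
        SELECT p.name, oc.role
        FROM objects o
        JOIN object_constituents oc ON oc.object_id = o.object_id
        JOIN people p ON p.person_id = oc.person_id
        WHERE o.object_number = ?
        ORDER BY p.name""", (object_number,)).fetchall()

print(constituents_for(build_demo(), "31.964"))
```

The same join should work in MySQL; mainly the connection code would change.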

I read about D2RQ (http://d2rq.org/), a tool for converting data from relational databases into an RDF format and making it accessible through SPARQL queries. It seems beyond my current technical abilities, but I wonder whether it could eventually be used to convert a database built up from Joshua’s current one into more interconnected URIs. Additionally, if the Whitney eventually hosts Joshua’s URIs online, this might help with providing a SPARQL endpoint to query them.

Using MySQL, maybe I’ll try to create an ER diagram of how the Objects and People tables could be connected, using the British Museum collection as a model. My initial thought would be to create tables representing the predicates in RDF triples. I’m not sure whether it would be possible to convert data from this kind of relational model into linked data, however. Linked data is meant to overcome the hurdles of storing data in relational databases, so maybe using MySQL for this kind of data is counterintuitive? Using MySQL as a triple store is apparently not unheard of, however (http://rdfextras.readthedocs.io/en/latest/store/mysqlpg.html).
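The idea of one table per predicate (sometimes called vertical partitioning) can be sketched as below. SQLite is used instead of MySQL for convenience, and the predicate keys and `example.org` URIs are placeholders. Reassembling full triples takes a `UNION ALL` across every predicate table, which hints at why this layout gets awkward as the number of predicates grows.

```python
import sqlite3

# Placeholder predicate URIs, not an existing Whitney vocabulary.
PREDICATES = {
    "creator": "http://example.org/whitney/vocab/creator",
    "acquired_from": "http://example.org/whitney/vocab/acquiredFrom",
}

def build_store(triples):
    """Store (subject, predicate_key, object) triples in one table per predicate."""
    conn = sqlite3.connect(":memory:")
    for table in PREDICATES:
        conn.execute(f"CREATE TABLE {table} (subject TEXT NOT NULL, object TEXT NOT NULL)")
    for subject, predicate, obj in triples:
        if predicate not in PREDICATES:
            raise ValueError(f"no table for predicate {predicate!r}")
        # Table names only ever come from PREDICATES, so interpolating them is safe.
        conn.execute(f"INSERT INTO {predicate} VALUES (?, ?)", (subject, obj))
    return conn

def all_triples(conn):
    """Rebuild full triples by tagging each table's rows with its predicate URI."""
    selects = [f"SELECT subject, '{uri}', object FROM {table}"
               for table, uri in PREDICATES.items()]
    return conn.execute(" UNION ALL ".join(selects) + " ORDER BY 1, 2").fetchall()

store = build_store([
    ("obj/31.964", "acquired_from", "person/gertrude-vanderbilt-whitney"),
    ("obj/31.964", "creator", "person/artist-1"),  # hypothetical artist ID
])
for triple in all_triples(store):
    print(triple)
```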

Alternately, maybe NoSQL makes more sense as a database system for the project, since it is more commonly used for triple stores. The British Museum seems to use GraphDB to store their triples, which is available as a free download (http://ontotext.com/products/graphdb/). GraphDB can import data stored as .ttl, .rdf, .rj, .n3, .nt, .nq, .trig, .brf, and .owl files. Maybe it would make more sense to apply a script to the Person and Object csv files to generate triples in a format that can be fed into GraphDB?
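Generating triples straight from the csv files could look roughly like this: one `schema:Person` node per row, written as N-Triples, which is among the formats GraphDB lists. The `http://example.org/whitney/` base URI and the `ConstituentID`/`DisplayName` column names are assumptions. Galleries would arguably need `schema:Organization` rather than `schema:Person`.

```python
import csv
import io

BASE = "http://example.org/whitney/"  # hypothetical base URI for Whitney resources
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
SCHEMA = "http://schema.org/"

def nt_literal(value):
    """Quote a string as an N-Triples literal, escaping backslashes, quotes, newlines, CRs."""
    escaped = (value.replace("\\", "\\\\").replace('"', '\\"')
                    .replace("\n", "\\n").replace("\r", "\\r"))
    return f'"{escaped}"'

def people_to_ntriples(csv_file):
    """Emit a type triple and a name triple for each row of a people csv."""
    lines = []
    for row in csv.DictReader(csv_file):
        subject = f"<{BASE}person/{row['ConstituentID'].strip()}>"
        lines.append(f"{subject} <{RDF_TYPE}> <{SCHEMA}Person> .")
        lines.append(f"{subject} <{SCHEMA}name> {nt_literal(row['DisplayName'])} .")
    return "\n".join(lines) + "\n"

sample = io.StringIO('ConstituentID,DisplayName\n101,"Henri, Robert"\n')  # hypothetical ID
print(people_to_ntriples(sample), end="")
```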

I received some overly aggressive sales emails from GraphDB after downloading a free version of the app, which have kind of put me off using it. Basically, when I downloaded the free version of the desktop app, someone in the sales department at GraphDB (which is apparently based in Bulgaria) used my name and registration email to look me up on LinkedIn and discovered I was working at the Whitney. This sales rep then emailed me suggesting a phone call with someone else on the GraphDB team, presumably so they could get me to convince the museum to buy a subscription. I basically told the guy I was in the very preliminary stages of my project, and that I had no budget for my project or say in the museum’s software/database purchases, but that I would keep them in mind for the future. I assume these kinds of emails are typical with museum vendors, but having no personal experience with them, I was really taken aback.

http://erlangen-crm.org/ – CIDOC-CRM ontology mapped onto OWL; used at British Museum

Possible predicate properties for indicating provenance:

CIDOC-CRM doesn’t seem to have a lot of terms related to acquisition, so maybe it would make sense to stick to Joshua’s use of schema.org?

https://schema.org/acquiredFrom

https://schema.org/sourceOrganization

https://schema.org/DonateAction
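In schema.org, `acquiredFrom` belongs to `OwnershipInfo`, which an organization or person points to through `owns`. Within it, `typeOfGood` names the thing owned and `ownedFrom` the start of ownership. Below is a sketch of one acquisition expressed that way as JSON-LD, with hypothetical `example.org` URIs. Strictly, `typeOfGood` expects a Product or Service, so putting an artwork there stretches the vocabulary a little. Gifts might instead, or additionally, be modeled with `DonateAction`, which isn't shown.

```python
import json

def acquisition_jsonld(museum_uri, object_uri, source_uri, owned_from=None):
    """An Organization that owns an OwnershipInfo naming the object and its source."""
    ownership = {
        "@type": "OwnershipInfo",
        "typeOfGood": {"@id": object_uri},
        "acquiredFrom": {"@id": source_uri},
    }
    if owned_from is not None:
        ownership["ownedFrom"] = owned_from  # schema.org expects an ISO 8601 value
    return {
        "@context": "https://schema.org",
        "@id": museum_uri,
        "@type": "Organization",
        "owns": ownership,
    }

doc = acquisition_jsonld(
    "http://example.org/whitney/organization/whitney",
    "http://example.org/whitney/object/31.964",
    "http://example.org/whitney/person/gertrude-vanderbilt-whitney",
)
print(json.dumps(doc, indent=2))
```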

Next week – work on joining the object and acquisition-related constituents files in Python.

Join on Object ID/Constituent ID, presumably

Also work on MySQL stuff

Do acquisition-related constituents go into the Person table, or do Object-Related and Acquisition-Related Constituents get separated?
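The join for next week could be as simple as indexing the object rows by ID and merging each constituent row into its object. This sketch assumes both exports share an `ObjectID` column, which is a guessed column name. Constituent rows pointing at objects missing from the export are dropped, so this is an inner join.

```python
import csv
import io

def join_on_object_id(objects_file, constituents_file, key="ObjectID"):
    """Inner join: attach each acquisition-related constituent row to its object row."""
    objects = {row[key]: row for row in csv.DictReader(objects_file)}
    joined = []
    for row in csv.DictReader(constituents_file):
        obj = objects.get(row[key])
        if obj is None:
            continue  # constituent refers to an object missing from this export
        joined.append({**obj, **row})  # on shared column names, constituent values win
    return joined

objects_csv = io.StringIO("ObjectID,ObjectNumber\n1,31.964\n2,31.229\n")
constituents_csv = io.StringIO("ObjectID,ConstituentID,Role\n1,77,Dealer\n3,78,Donor\n")
for row in join_on_object_id(objects_csv, constituents_csv):
    print(row)
```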

How to make more interesting:

Try to map onto Whitney Studio Club materials on DPLA: Would be cool to connect objects to paperwork related to their purchase.

Given that MySQL is not well-suited as a triple store, I think it would make sense to migrate Joshua’s data into a non-relational triple store and extend it there. The Linked Jazz Project at Pratt uses Apache Marmotta for their triples, and I’m thinking this would be a good solution for the Whitney as well. Prof. Pattuelli has said there’s space for me to experiment on the Linked Jazz Marmotta server. The opendata.whitney.org server is another option; additionally, data from one server could always be migrated to another eventually.

Is Marmotta too complicated, though? What is its user interface like? Will discuss at meeting w/everyone on Thursday.

First I need to run a script that checks the gift and donation csv files against artist.csv and removes duplicates.
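Here is a sketch of that de-duplication step. It assumes each file has a `ConstituentID` column (a hypothetical name) and treats any ID present in artist.csv as already covered. It also skips IDs repeated within the same file.

```python
import csv
import io

def drop_known_artists(constituents_file, artists_file, out_file, key="ConstituentID"):
    """Copy rows whose key is not in the artist file, also skipping repeated keys.

    Returns the number of data rows written (the header is not counted).
    """
    artist_ids = {row[key].strip() for row in csv.DictReader(artists_file)}
    reader = csv.DictReader(constituents_file)
    writer = csv.DictWriter(out_file, fieldnames=reader.fieldnames)
    writer.writeheader()
    seen, kept = set(), 0
    for row in reader:
        ident = row[key].strip()
        if ident in artist_ids or ident in seen:
            continue
        seen.add(ident)
        writer.writerow(row)
        kept += 1
    return kept

out = io.StringIO()
count = drop_known_artists(
    io.StringIO("ConstituentID,Name\n1,Artist A\n2,Dealer B\n2,Dealer B\n"),
    io.StringIO("ConstituentID,Name\n1,Artist A\n"),
    out,
)
print(count, out.getvalue(), sep="\n")
```

It would be run once per file (gifts, then donations), with real files opened using `newline=""` as the csv module recommends.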

I’m going to start playing around in OpenRefine to see if I can combine some csv files, or at least get rid of duplicate entries within files:

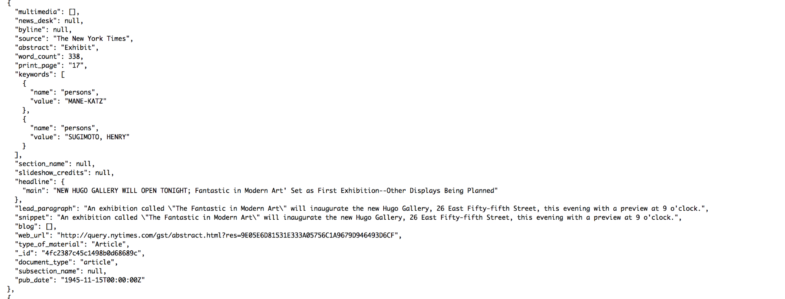
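OpenRefine's clustering uses key-collision methods, the simplest being a "fingerprint" of each value. A simplified Python version of that idea could flag near-duplicate names within a file before cleaning. It is modeled loosely on OpenRefine's fingerprint method rather than copied from it: ASCII-fold and lowercase each name, strip punctuation, then sort the unique tokens.

```python
import re
import unicodedata
from collections import defaultdict

def fingerprint(name):
    """Key-collision fingerprint: ASCII-fold, lowercase, drop punctuation, sort unique tokens."""
    text = unicodedata.normalize("NFKD", name).encode("ascii", "ignore").decode("ascii")
    text = re.sub(r"[^\w\s]", "", text.strip().lower())
    return " ".join(sorted(set(text.split())))

def clusters(names):
    """Group names that share a fingerprint; return only groups with 2+ members."""
    groups = defaultdict(list)
    for name in names:
        groups[fingerprint(name)].append(name)
    return [group for group in groups.values() if len(group) > 1]

print(clusters(["Frank K. M. Rehn", "Rehn, Frank K. M.", "Macbeth Gallery"]))
```

Like OpenRefine's version, this will not match "K. M." against "K.M.", since the second collapses into a single token.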

What info does the NYTimes have on Provenance-related constituents? I’m checking the API for anything interesting. Maybe more names (related entities) could be pulled from the NYT’s ‘name.value’ element.
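If I'm reading the Article Search API correctly, each returned article carries a `keywords` list whose entries have a `name` (such as `persons`) and a `value`. That structure is my assumption and should be checked against the API documentation. The sketch below only parses a hand-made sample response and makes no network call.

```python
def person_keywords(response):
    """Collect unique 'persons' keyword values from an Article Search-style response."""
    names = []
    for doc in response.get("response", {}).get("docs", []):
        for keyword in doc.get("keywords", []):
            value = keyword.get("value")
            if keyword.get("name") == "persons" and value and value not in names:
                names.append(value)
    return names

sample_response = {  # hand-made stand-in, not real API output
    "response": {"docs": [
        {"keywords": [
            {"name": "persons", "value": "Rehn, Frank K. M."},
            {"name": "subject", "value": "Art"},
        ]},
        {"keywords": [{"name": "persons", "value": "Rehn, Frank K. M."}]},
    ]}
}
print(person_keywords(sample_response))
```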

Another project idea would be to query DPLA for digitized purchase records from the Whitney Studio Club available on DCMNY. It’s kind of cool to see how much these pieces sold for. Poppies by Ernest Fiene, for instance, went for $100.

Not really sure if this would yield great visualizations – could maybe pull images? Also, maybe this would be redundant if TMS object records are already connected to objects in WhitneyCat (although this doesn’t seem to be the case?). I am interested in the issue of how to connect digital archival materials with collection objects they relate to. Maybe adding links to the URLs of the archival documents on DCMNY to the object/person URIs? It is interesting how much of the paperwork for these early purchases/donations is online. The descriptions of some of these archival objects also give some interesting context to the purchases.

Third project idea: map the galleries that contributed to the Founding Collection that are still around, using NYC Open Data: https://data.cityofnewyork.us/Recreation/New-York-City-Art-Galleries/tgyc-r5jh

Not many of them are still around though…so maybe not.

Querying Wikidata: Not sure if it could be the basis of a separate project. Their “instance of: art gallery” property is interesting:

https://www.wikidata.org/wiki/Q7990321

Another idea – link constituents to Archives of American Art records, since the Archives seem to have the richest info on early 20th century galleries and a lot of digitized collections. They don’t have an API, though, so I’m not sure how easy it would be to extract their content. Additionally, the images on their website are loaded through a viewer (rather than being downloadable JPEGs):

http://www.aaa.si.edu/collections/aca-galleries-records-8772/more#section_1

Archives of American Art is sometimes the only place I can find info on these galleries. I’ve found some with ULAN and/or VIAF records, but that’s the extent of the content I can pick out.

As of MySQL Version 5.7.8, MySQL can be used to generate JSON. If these JSON files could be put into a triple store, the generation of PHP files in addition to JSON files may not be necessary:

http://dev.mysql.com/doc/refman/5.7/en/json-functions.html

I started a fork of the Whitney’s opendata Github repository for my project files. Not sure if forking or branching is a better approach for keeping my contributions to the project, but I can always change the structure later:

https://github.com/MollieEcheverria/opendata

I’m currently trying to re-model how the Whitney’s linked data is structured. Joshua’s structure uses Schema.org, but I would like to attempt to model the existing data onto CIDOC if possible, in keeping with what the British Museum has done with their linked data, as well as the Carnegie Museum’s model.

I’m starting by trying to create a data model using Draw.io. This is proving a little confusing thus far: https://drive.google.com/file/d/0B2gZKtQxkfUhX19FdXlDUEp2b2c/view?usp=sharing

Additionally, I am trying to convert a sample British Museum object record (http://collection.britishmuseum.org/id/object/EOC3130) from JSON to CSV using Python, in order to reverse engineer the conversion of CSV field data into CIDOC-structured JSON. My script attempts are in my Github repository.
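One generic way to turn nested JSON into CSV is to flatten it, joining nested keys and list indexes into dotted column names. This is a sketch of that technique, not the script in my repository. The sample keys loosely imitate CIDOC-style property names and are placeholders. Empty lists or objects simply disappear from the output.

```python
import csv
import io

def flatten(value, prefix=""):
    """Flatten nested dicts/lists into {"a.b.0.c": leaf} pairs."""
    items = {}
    if isinstance(value, dict):
        for key, child in value.items():
            items.update(flatten(child, f"{prefix}.{key}" if prefix else key))
    elif isinstance(value, list):
        for index, child in enumerate(value):
            items.update(flatten(child, f"{prefix}.{index}" if prefix else str(index)))
    else:
        items[prefix] = value
    return items

def records_to_csv(records):
    """Flatten each record and write all of them as one CSV; missing cells stay empty."""
    rows = [flatten(record) for record in records]
    fieldnames = sorted({key for row in rows for key in row})
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()

sample = {"@id": "http://collection.britishmuseum.org/id/object/EOC3130",
          "P2_has_type": [{"@id": "type-1"}, {"@id": "type-2"}]}  # placeholder values
print(records_to_csv([sample]))
```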

Database-wise, since MySQL can be used to output JSON, I’m planning to keep using and extending Joshua’s MySQL database for now. If and when a triple store is implemented at the Whitney, JSON-LD/.nt files would need to be fed into it, so the MySQL database would serve as the source for generating these files. This seems to be how Linked Jazz at Pratt generated their triples as well (or at least how they stored the names from the transcripts they analyzed: https://linkedjazz.org/data-productionworkflow-draft/).

I am aiming to have a data model for the Whitney ready and approved by the first week of December.

Once the data model is established, I will query for name authority records for Provenance-related constituents.

As for where to find authority files for obscure early 20th century dealers and collectors, VIAF seems to have the most consistent results, as I found while researching back in September.

Worldcat’s experimental Linked Data also has some great relationship content for records related to some of these constituents, though the type of the relationship is not necessarily well-defined: http://www.worldcat.org/title/ferargil-galleries-records-circa-1900-1963/oclc/888072546

Getting data out of TMS’ free-text Provenance field:

Can use Python string matching to look for dates.

Each provenance note in TMS is separated by a semicolon, making it easy to use Python to sort these notes into distinct events.
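Here is a sketch combining the two ideas above. It splits the note on semicolons, then pulls a leading date range off each event. The date patterns (`1929-1931`, `1925-`, `-1929`, `1930`, `n.d.`) are the ones that appear in the Founding Collection notes listed earlier; anything else just lands in `text` with no dates. Treating a bare year as both start and end is my own interpretive assumption.

```python
import re

# Optional leading date prefix ("n.d.", "1929-1931", "1925-", "-1929", "1930"),
# followed by the rest of the event text.
EVENT = re.compile(
    r"^(?:(?P<undated>n\.d\.)|(?P<start>\d{4})?(?P<dash>-)?(?P<end>\d{4})?)\s*(?P<text>.*)$",
    re.DOTALL,
)

def parse_provenance(note):
    """Split a Provenance note into ordered events with start/end years."""
    parts = [part.strip() for part in note.split(";") if part.strip()]
    events = []
    for order, part in enumerate(parts):
        match = EVENT.match(part)
        start, end = match.group("start"), match.group("end")
        if start and match.group("dash") is None:
            end = start  # assumption: a bare year means an event within that year
        events.append({
            "order": order,
            "start": start,
            "end": end,
            "undated": match.group("undated") is not None,
            "text": match.group("text"),
        })
    return events

note_31_380 = ("-1929 collection of the artist; 1929-1931 collection of Gertrude "
               "Vanderbilt Whitney, New York, New York; 1931 Whitney Museum of American "
               "Art, New York (gift of Gertrude Vanderbilt Whitney)")
for event in parse_provenance(note_31_380):
    print(event)
```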

The Carnegie Museum just puts their TMS Provenance info into a CIDOC “P3_has_note” field, but it seems like they could easily extract more useful info from these.

I could use either Python or MySQL to create updated JSON-LD URI files. I’m not sure which method is easier at this point, but will have a better sense after doing more work in my Programming for Cultural Heritage/Database Design classes. MySQL might make sense since SPARQL is SQL-like?

All of these JSON-LD files can then be combined into an N-Triples file (the format used for Linked Jazz’s data) and put into a triple store on the Whitney’s server (which would solve the PHP redirection issue Joshua was having).

After everything is online, if there is sufficient time left in the Spring semester, I would use the Whitney’s URIs as the basis of some kind of visualization project to demonstrate how they can be used by the public.

Specifically, it might be good to connect the Whitney’s linked data with another museum’s, the Smithsonian Renwick Gallery being the obvious example.

The Smithsonian’s artist URIs (http://edan.si.edu/saam/id/person-institution/176) could even be linked to in ours.

I created a preliminary data model for the Whitney’s data using the Smithsonian’s and British Museum’s URIs and the Carnegie Museum’s provenance model as references. I also looked at a mapping of CIDOC to LIDO done by researchers at FORTH-ICS in 2010 (http://www.cidoc-crm.org/Resources/the-lido-model), and compared it to the mapping of the Whitney’s TMS data to LIDO detailed in the Whitney Content Standard Element Sets.

One issue I’ve noticed in trying to map data to CIDOC is that CIDOC doesn’t seem to provide individual namespace URIs for its different classes and properties. Instead, these terms are all stored in a single namespace file: (http://www.cidoc-crm.org/sites/default/files/cidoc_crm_v5.0.4_official_release.rdfs.xml)

Because of this issue, the Smithsonian’s links to CIDOC-CRM term resources come up dead (http://edan.si.edu/saam/id/object/1997.70/acquisition), while the British Museum uses an OWL-based CIDOC mapping that just downloads the entire ontology as a single RDF namespace file rather than linking to individual term URIs. This poses a problem, as creating triples that can’t be properly referenced to a URI would violate basic linked data principles.

One solution to this issue would be to map terms from one or more external vocabularies onto the CIDOC structure. Prof. Pattuelli suggested Linked Open Vocabularies as a resource to research some of these: https://lov.okfn.org/dataset/lov/

It looks like there was an attempt to map CIDOC to Dublin Core back in 2000 (http://www.cidoc-crm.org/sites/default/files/dc_to_crm_mapping.pdf), but that mapping doesn’t seem to address CIDOC Event entities at all. Dublin Core does have an Event class, however (http://purl.org/dc/dcmitype/Event), so it seems like it wouldn’t be impossible to map Dublin Core terms onto CIDOC’s event-based structure.

Prof. Miller gave my Programming for Cultural Heritage class read-only access to the NYPL’s archives database. Looking at the way this data is structured, particularly the access terms for constituents, is helpful in thinking about a tabular structure for the Whitney’s linked data.

There are three tables in the NYPL Archives schema: `collections`, `access_terms`, and `access_term_associations`.

The `access_term_associations` table is used to link the individual archival collections, store in the `collections` tables, to names of constituents, which are stored in the `access_terms` table:

This structure allows the NYPL to generate some interesting interactive interfaces: http://archives.nypl.org/tools

An archival collection from this database represented in JSON:

{
  "id": 1,
  "title": "Thomas Addis Emmet collection",
  "origination": "Emmet, Thomas Addis,\n 1828-1919",
  "org_unit_id": 1,
  "date_statement": "1483-1876 [bulk 1700-1800]",
  "extent_statement": "30.83 linear feet; 108 boxes, 21 volumes",
  "linear_feet": 30.83,
  "keydate": 1483,
  "identifier_value": "927",
  "identifier_type": "local_mss",
  "bnumber": null,
  "call_number": "MssCol 927",
  "pdf_finding_aid": "",
  "max_depth": 3,
  "series_count": 28,
  "active": 1,
  "created_at": "2013-01-08 20:52:54",
  "updated_at": "2015-11-05 03:03:37",
  "boost_queries": "[\"emmet\"]",
  "date_processed": null,
  "component_layout_id": 2,
  "has_digital": 1,
  "featured_seq": null,
  "fully_digitized": 1,
  "show_generated_pdf": 0,
  "status_note": null
}

These archival materials don’t have URIs associated with them directly, presumably because they are organized at the collection level.

An access term (a constituent or concept) related to this collection, including a link to its authority record:

{
  "id": 3004,
  "term_original": "Legislators–United States",
  "term_authorized": null,
  "term_type": "topic",
  "authority": "lcsh",
  "authority_record_id": null,
  "value_uri": "http://id.loc.gov/authorities/subjects/sh85075851",
  "control_source": null,
  "created_at": "2013-01-08 21:01:45",
  "updated_at": "2013-01-08 21:01:45"
}

The access term association record that links the collection to the concepts and people related to it:

{
  "id": 7246,
  "describable_id": 1,
  "describable_type": "Collection",
  "access_term_id": 3004,
  "role": null,
  "controlaccess": 1,
  "name_subject": 0,
  "created_at": "2013-01-08 21:01:45",
  "updated_at": "2013-01-08 21:01:45",
  "function": null,
  "questionable": 0
}

Because there may be many terms associated with each collection, and since any given term may apply to multiple collections, the access terms association table exists to represent this many-to-many relationship.
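To make the many-to-many pattern concrete, here is a minimal sketch using Python's built-in sqlite3 module. The table names, column names, and sample values come from the NYPL records above. The schema itself is pared down to a few columns and is an assumption, not the NYPL's actual table definitions.

```python
import sqlite3

# Pared-down versions of the three NYPL Archives tables (assumed schema).
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE collections (id INTEGER PRIMARY KEY, title TEXT);
CREATE TABLE access_terms (
    id INTEGER PRIMARY KEY,
    term_original TEXT,
    value_uri TEXT
);
-- Associative table: one row per (collection, term) pairing.
CREATE TABLE access_term_associations (
    id INTEGER PRIMARY KEY,
    describable_id INTEGER REFERENCES collections(id),
    describable_type TEXT,
    access_term_id INTEGER REFERENCES access_terms(id)
);
""")

# Values taken from the JSON records above.
conn.execute("INSERT INTO collections VALUES (1, 'Thomas Addis Emmet collection')")
conn.execute(
    "INSERT INTO access_terms VALUES (3004, 'Legislators–United States', "
    "'http://id.loc.gov/authorities/subjects/sh85075851')"
)
conn.execute("INSERT INTO access_term_associations VALUES (7246, 1, 'Collection', 3004)")


def terms_for_collection(conn, collection_id):
    """Return (term, authority URI) pairs linked to one collection."""
    rows = conn.execute(
        """
        SELECT t.term_original, t.value_uri
        FROM access_term_associations AS a
        JOIN access_terms AS t ON t.id = a.access_term_id
        WHERE a.describable_type = 'Collection' AND a.describable_id = ?
        """,
        (collection_id,),
    )
    return rows.fetchall()


print(terms_for_collection(conn, 1))
```

Adding a second collection that uses the same term would only require one more row in `access_term_associations`. Neither the collection nor the term would need to be duplicated.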

For the Whitney’s data, event table(s) could serve a similar role as the access terms association table for the NYPL, connecting constituents and objects as well as places and thesaurus terms.

While non-relational databases are the standard for storing linked data, relational databases are themselves built around relationships, so it seems reasonable that they could represent linked data relationships as well. There is still the issue of hosting, however, which is presumably where a triple store would be needed.

This site (https://sites.tufts.edu/liam/) gives a great overview of methodologies for implementing linked data in archival settings. It also argues in favor of using a relational database as the basis for generating triples.

The article mentions D2RQ, a tool for accessing relational databases as virtual RDF graphs. I explored it briefly earlier in the semester and tried unsuccessfully to install it. I’m not sure I would have the technical ability to install it myself, but if IT at the Whitney could implement it as they did Joshua’s PHP server, I could develop a MySQL database and host it on that server. That would solve the triple store issue and provide a SPARQL endpoint as well.

The article also mentions a project called ReLoad, which seems to involve URIs and a SPARQL endpoint generated by/stored in xDAMS. The URI links for this project seem to be dead, however, and I’m somewhat unclear about how everything within the project is structured.

I ended up creating a test MySQL database and uploaded a SQL file for this database to GitHub.

https://app.graphenedb.com/dbs

http://www.linkeddatatools.com/introducing-rdf

http://blog.datagraph.org/2010/04/rdf-nosql-diff

https://virtuoso.openlinksw.com/dataspace/doc/dav/wiki/Main/VOSIndex

http://wifo5-03.informatik.uni-mannheim.de/bizer/d2r-server/publishing/

https://sourceforge.net/projects/trdf/files/?source=navbar

Hearing Alex Provo speak in our Art Documentation class on the Drawings of the Florentine Painters linked data project has given me a lot of inspiration both for the handling of the Whitney ontology and for how to normalize and store linked data triples. I am anxious to see how the official launch of this project goes in February, as this project would be an excellent model for how to handle Whitney object data. It would also seem logical to try to incorporate this model into things like online catalogue raisonnés and museum catalogues as well.

After reading more on Drawings of the Florentine Painters, I think the project offers a very concise model for how to proceed with the Whitney’s linked data in the spring.

While I was originally planning to use only CIDOC entities and properties plus Art and Architecture Thesaurus controlled terms, I can now see that incorporating ULAN/VIAF authority files into the Whitney model would be beneficial, particularly given that CIDOC only has a namespace page for its properties rather than individual URIs.

After querying Wikidata for my project in Programming for Cultural Heritage, I don’t think it would be a good name authority source for the Whitney. During my early exploration of Acquisition-related names associated with the Whitney’s Founding Collection, I found that even some of the most obscure early 20th-century art dealers had VIAF or LC authority records, whereas I doubt Wikidata would have much information on these constituents.

GeoNames would also be a good source for place names.

My work in Spring will be roughly structured around how Alex Provo et al. have described their process in their soon-to-be-published write-up on the Drawings of the Florentine Painters project.

I first need to combine all of my and Joshua’s data into three main CSV files: constituents, objects, and events. This can be done in Google Sheets.

I might also need to query TMS for some additional constituent data.

I would then use OpenRefine and possibly Python to handle any discrepancies in the data (splitting, cleaning, merging, etc).
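Here is a minimal sketch of the kind of splitting, cleaning, and merging step Python could handle, using only the standard csv module. The column names (`DisplayName`, `Roles`), the semicolon separator, and the sample rows are hypothetical. The real fields would come from the combined constituent sheets.

```python
import csv
import io


def normalize(value):
    """Collapse internal runs of whitespace and trim the ends."""
    return " ".join(value.split())


def clean_constituents(rows, name_field="DisplayName", multi_field="Roles", sep=";"):
    """Merge rows that share a normalized name, splitting a multi-valued
    field on `sep` and keeping each distinct value once."""
    merged = {}
    for row in rows:
        name = normalize(row[name_field])
        raw = row.get(multi_field) or ""
        values = {normalize(v) for v in raw.split(sep) if v.strip()}
        merged.setdefault(name, set()).update(values)
    return {name: sorted(values) for name, values in merged.items()}


# Hypothetical rows: the same constituent entered twice with stray spaces.
sample = io.StringIO(
    "DisplayName,Roles\n"
    "Jane Doe,donor\n"
    "Jane  Doe ,donor; lender\n"
)
print(clean_constituents(csv.DictReader(sample)))
# {'Jane Doe': ['donor', 'lender']}
```

OpenRefine's clustering would catch fuzzier duplicates than this exact-match merge. A script like this is mainly useful for repeatable, rule-based fixes.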

I could either keep this data in CSV files or import it into a MySQL database. A relational database might be easier to manage and append, and might also be more useful for representing relationships, but could take time to build.

I might refine the Whitney model based on the data at this point. The Drawings of the Florentine Painters project experimented with incorporating equivalent properties from other ontologies, like Dublin Core, alongside CIDOC, although this idea was ultimately scrapped. I’m taking Metadata: Description and Access in the Spring, so by February I may have a better sense of whether to include other schemas, and which ones.

The Drawings of the Florentine Painters project used two applications to map its tabular data onto CIDOC: Mapping Memory Manager (3M) and Karma.

3M (http://139.91.183.3/3M/FirstPage):

3M, which unfortunately has a somewhat buggy website, is a web-based tool for managing mapping definition files.

This document (http://83.212.168.219/DariahCrete/sites/default/files/mapping_manual_version_4g.pdf) has more details on how to use 3M.

The aforementioned PDF also mentions this hierarchical representation of CIDOC in Stanford’s WebProtégé, which usefully ranks CIDOC classes from general to specific: http://webprotege.stanford.edu/#Edit:projectId=6fe69ce8-94b9-4624-bfe6-43af7c6d0fe3

3M is built specifically for CIDOC mappings. As my model for the Whitney is based on CIDOC, I can use the site to map the Whitney’s linked data.

3M currently hosts over 500 different mappings, primarily CIDOC-based. These include mappings of LIDO and Dublin Core onto CIDOC, models based on internal relational database content, and even what looks like someone’s attempt to map the TMS eMuseum module.

Schemas are uploaded as XML. You can also export other people’s data mappings, see detailed comparisons of different mappings of the same source schema, and view a detailed analysis of your own schema.

To plug in the Whitney’s data, I would first need a source schema in XML form, derived from the TMS fields in whatever tabular data I have. I’m still a little unclear on how to do this. Alexandra apparently used a Python script to convert the column names in her tabular data to XML. 3M also provides an XML schema called X3ML as a template for source schemas, so I could probably plug fields into this template manually. The 3M documentation also explains how to use joins to convert relational database source data into a usable XML source schema.
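Alexandra's actual script isn't described here, but a minimal sketch of the idea might look like this. It uses Python's csv and xml.etree.ElementTree modules. The `records`/`record` element names are hypothetical, and the column names are assumed to be valid XML names once spaces are replaced with underscores. 3M may also expect a different structure than this flat layout.

```python
import csv
import io
import xml.etree.ElementTree as ET


def csv_to_xml(csv_file, root_tag="records", record_tag="record"):
    """Wrap each CSV row in a record element whose children are named after
    the column headers (spaces replaced with underscores)."""
    root = ET.Element(root_tag)
    for row in csv.DictReader(csv_file):
        record = ET.SubElement(root, record_tag)
        for column, value in row.items():
            child = ET.SubElement(record, column.strip().replace(" ", "_"))
            child.text = value
    return ET.tostring(root, encoding="unicode")


# Hypothetical columns loosely modeled on TMS field names.
sample = io.StringIO("ObjectID,Title Sort,Credit Line\n23928,Example Title,Gift of Example\n")
print(csv_to_xml(sample))
```

Headers that start with a digit or contain punctuation would need extra renaming before they could be used as element names.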

3M also requires a URI policy generator XML file, which it uses to create URIs associated with whatever domain the source schema is hosted on (/whitney/collection/object/23928, etc).
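The core idea of a URI policy can be sketched as a function that builds predictable URIs from an entity type and a local identifier. This is not 3M's generator file format. The base URI is a hypothetical placeholder, and the path pattern simply follows the /whitney/collection/object/23928 example above.

```python
from urllib.parse import quote

# Hypothetical base URI; the real one would depend on where the data is hosted.
BASE_URI = "http://example.org/whitney/collection"


def make_uri(entity_type, local_id, base=BASE_URI):
    """Build a URI of the form {base}/{entity type}/{local id}, percent-encoding
    anything that is not URI-safe (slashes are left as-is by quote)."""
    return f"{base}/{quote(entity_type.lower())}/{quote(str(local_id))}"


print(make_uri("Object", 23928))  # http://example.org/whitney/collection/object/23928
print(make_uri("Acquisition", "31.694.1"))  # http://example.org/whitney/collection/acquisition/31.694.1
```

Keeping the pattern in one function means every table gets URIs built the same way. This is essentially what the policy file enforces inside 3M.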

Once these two files are uploaded to 3M, I can use the site’s interface to associate each TMS field with a CIDOC class or property. 3M can also validate your mapping and suggest other class and entity mappings.

The final mapping (or Target Record) can then be exported from 3M as RDF/XML, N-Triples, or Turtle.

Karma (http://usc-isi-i2.github.io/karma/)

Karma is a data integration tool that can be used for database data, spreadsheets, XML, and JSON. Karma automates the process of adding URIs to this data and mapping it to an ontology.

This document (http://www.isi.edu/~szekely/contents/papers/2013/eswc-2013-saam.pdf) describes its use in greater detail.

I would start by preparing the Whitney’s tabular data for import into Karma, either directly from a CSV file, or from a SQL database if I choose to use one. Depending on what storage format I choose, I would either concatenate columns in MySQL or use OpenRefine.

Karma is a desktop app that works somewhat like MySQL Workbench. You work with and manage data locally, but the data can later be hosted on an external server.

Karma can also run using data from a hosted SQL database. If I choose to store my initial data in a SQL database, I could opt to host this on the internal Whitney server Joshua used this past year and access Karma through there.

I would then use Karma to map the various columns of data onto classes from the CIDOC mapping I refined in 3M.

Karma can also link data to external resources. Possible sources for the Whitney include:

VIAF/LCSH/ULAN: For Acquisition-related constituents. Joshua already used ULAN for Object-related constituents, but it might be worth enriching his data with VIAF/LCSH data as well.

GeoNames: For places related to events/constituents

DBpedia/Wikidata: I don’t know how many of the Whitney’s non-artist constituents (in particular Acquisition-related constituents) would have records on these sites, but they might be worth investigating if time permits.

Data from Other Museums (The Smithsonian, British Museum, etc): This actually might be the most important enrichment data to include. One of the main goals of implementing linked open data at the Whitney would be to enable the sharing of resources with other art-related cultural institutions, so this integration is key.

Once all the data is prepared, it can then be hosted in some kind of graph database.

Drawings of the Florentine Painters is using Metaphacts (http://www.metaphacts.com/), which seems like an attractive solution.

This would either be hosted on the Whitney server, or possibly on the server of whatever company I use.

Time permitting, I will create some kind of visualization project with the Whitney’s data using Gephi/Tableau.

I could also try to incorporate image content from TMS/the main Whitney Collection page in some way.

Access server – Access granted – Linux

3M – tricky. Maybe not necessary

Alex – can come in to look at project

Look at what is on the server – is there stuff on there Josh added that is not mirrored elsewhere

Look at the namespaces now/early

Maybe just using Python would be easier than 3M/Karma

I was unfortunately unable to set up access to Joshua’s Whitney database server before the break. I have an appointment to troubleshoot the issue with Alison on Thursday, January 5th at 3.

My first attempt at gathering name authorities for the founding collection will involve querying VIAF, as they seemed to have the most consistent records for the somewhat obscure Acquisition-related constituents during my initial search.

VIAF has a pretty standard API (https://platform.worldcat.org/api-explorer/apis/VIAF), which seems like it should be straightforward to query. Since I don’t have any IDs from other authorities to start with, I would use the Authority Cluster Auto Suggest method.

An initial Authority Cluster Auto Suggest search for Briggs Buchanan, a constituent from the Gift list, yields this record (http://viaf.org/viaf/64028666/) which seems to be for the right person (died in 1976, seems to have been an art history scholar).

I created a Python script to query VIAF using the Auto Suggest method, which seems to have been a success.
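The script itself isn't reproduced here, but a minimal sketch of an Auto Suggest lookup with Python's standard library might look like the following. The endpoint URL and the response keys (`result`, `term`, `viafid`, `lc`) should be treated as assumptions. VIAF's API has changed since then, so check the current documentation before relying on them.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed AutoSuggest endpoint; verify against current VIAF documentation.
AUTOSUGGEST_URL = "http://www.viaf.org/viaf/AutoSuggest"


def autosuggest_url(name):
    """Build an AutoSuggest request URL for a personal or corporate name."""
    return f"{AUTOSUGGEST_URL}?{urlencode({'query': name})}"


def parse_suggestions(payload):
    """Turn an AutoSuggest JSON payload into (label, VIAF URI, LC URI) tuples.
    Assumes each hit has 'term' and 'viafid' keys, an 'lc' key only when an
    LC record exists, and that 'result' may be null when nothing matches."""
    matches = []
    for hit in payload.get("result") or []:
        viaf_uri = f"http://viaf.org/viaf/{hit['viafid']}"
        lc_id = hit.get("lc")
        lc_uri = f"http://id.loc.gov/authorities/names/{lc_id}" if lc_id else None
        matches.append((hit.get("term"), viaf_uri, lc_uri))
    return matches


def lookup(name):
    """Query VIAF live (requires network access)."""
    with urlopen(autosuggest_url(name)) as response:
        return parse_suggestions(json.load(response))
```

For a hit whose `viafid` is 64028666, `parse_suggestions` would yield http://viaf.org/viaf/64028666, the Briggs Buchanan record above. Auto Suggest returns candidates, not confirmed matches, so each result still needs the kind of manual check described below.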

Both VIAF and LC URIs are now saved in CSV files for the Gift and Purchase-related constituents.

These URIs seem for the most part to be accurate, although a few point to the wrong record (Edward Root to his foundation instead of his personal name, Thomas Donnelly to the wrong Thomas Donnelley).

VIAF records sometimes include links to the person’s corresponding Wikidata and ULAN URIs, so querying these sites may be a next step.

After my success with querying VIAF last week, I’d like to explore incorporating person/institution URIs from other institutions into the Whitney’s constituent URIs as well.

The Drawings of the Florentine Painters project plans to incorporate linked data and images from the British Museum into its URIs. Linking the Whitney’s data to external sources could have similar enrichment value.

The Smithsonian American Art Museum seems like it would be the most obvious source for enrichment. The SAAM has internal URIs for all of the artists in its collection, many of which include bios and images.

The Smithsonian’s Archives of American Art also seems like it could provide some enrichment value. The SAAA’s website appears to have been redesigned and relaunched within the last month or so, and the site has persistent URIs for many archival items related to the Whitney Foundation Collection.

Connecting the URIs of archival documents to constituent URIs may be somewhat of a challenge, however. CIDOC’s event structure could be applied to archival documents in much the same way it is applied to art objects (http://ceur-ws.org/Vol-1117/paper5.pdf). CIDOC’s P129 is about (is subject of) could be used to connect various types of E73 Information Object (i.e., archival documents) to constituents, as per the Carnegie Provenance Model. This may be better left to a later stage in the project, when object and constituent URIs have already been created, but it would be an interesting challenge. Connecting art collection items to archival documents was one of the primary goals of Victoria’s and my Art Documentation project this past fall. It continues to interest me, and it would be interesting to explore how linked data could facilitate these connections.
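As a rough illustration of the P129 approach, the sketch below emits N-Triples that type an archival document as an E73 Information Object and link it to a constituent. It uses the http://www.cidoc-crm.org/cidoc-crm/ namespace from the official CIDOC RDFS file. The document URI is a hypothetical placeholder. The actor URI is Gertrude Vanderbilt Whitney's SNAC URI, used purely as an example.

```python
# Namespace of the official CIDOC CRM RDFS encoding.
CRM = "http://www.cidoc-crm.org/cidoc-crm/"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"


def about_triples(document_uri, actor_uri):
    """Return N-Triples lines typing a document as E73 Information Object
    and linking it to the actor it discusses via P129 is about."""
    return [
        f"<{document_uri}> <{RDF_TYPE}> <{CRM}E73_Information_Object> .",
        f"<{document_uri}> <{CRM}P129_is_about> <{actor_uri}> .",
    ]


# Hypothetical document URI; the actor URI is the SNAC URI for
# Gertrude Vanderbilt Whitney.
for line in about_triples(
    "http://example.org/whitney/archives/letter_1",
    "http://socialarchive.iath.virginia.edu/ark:/99166/w6805436",
):
    print(line)
```

The inverse, P129i is subject of, doesn't need to be stored separately. A reasoner or query can derive it from the same statement.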

The SAAM doesn’t have an API, but it does have a SPARQL endpoint.

Unfortunately, the SAAM’s person/institution URIs do not include a literal value for the person’s name, meaning I can’t use SPARQL to search for the names of Whitney constituents. These URIs also don’t include any links to outside name authorities like ULAN, VIAF, or Wikidata. This seems like a pretty bad linked data model, as it makes it difficult to connect constituents in the SAAM collection to non-Smithsonian resources.

Given these limitations, I’m going to try manually scraping the SAAM’s Browse Collections to see if I can extract any useful URIs.
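Here is a minimal scraping sketch using Python's built-in html.parser. The path fragment `/collections/search/artist/` and the sample HTML are hypothetical placeholders. The actual Browse Collections markup would need to be inspected first, and the site's terms of use checked.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ArtistLinkParser(HTMLParser):
    """Collect absolute URLs of links whose href contains a given fragment."""

    def __init__(self, base_url, fragment):
        super().__init__()
        self.base_url = base_url
        self.fragment = fragment
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href and self.fragment in href:
            self.links.append(urljoin(self.base_url, href))


# Hypothetical page snippet and URL pattern.
html = '<a href="/collections/search/artist/?id=123">Artist</a><a href="/about">About</a>'
parser = ArtistLinkParser("http://americanart.si.edu", "/collections/search/artist/")
parser.feed(html)
print(parser.links)
```

The fetched page would be passed to `feed` in the same way, one page of the browse listing at a time.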

I’m now set up with access to the Opendata Server via phpMyAdmin. I don’t have access through MySQL Workbench yet, but I can upload and download data through the web interface.

At the most recent Linked Jazz meeting, Karen noted that Wikidata now includes Social Networks and Archival Context (SNAC) IDs for some entities (https://www.wikidata.org/wiki/Q188969).

Not sure how many Whitney constituents would have Wikidata URIs/SNAC URIs, but this might present some interesting enrichment opportunities.

Guy Pène du Bois, for instance, has a SNAC URI: http://socialarchive.iath.virginia.edu/ark:/99166/w6pv7c36

The Whitney Studio Club: http://socialarchive.iath.virginia.edu/ark:/99166/w6cz7999

Gertrude Vanderbilt Whitney: http://socialarchive.iath.virginia.edu/ark:/99166/w6805436

SNAC is still a prototype, however, and its data seems to come from VIAF, the Library of Congress, and WorldCat.

Wikidata also doesn’t seem to have many SNAC links incorporated yet. Guy Pène du Bois has a SNAC ID, but his Wikidata page does not link to it.

This Google Sheets/OpenRefine tutorial was also mentioned in the Linked Jazz meeting: http://blog.silk.co/post/127234807482/from-ombd-to-gender-data-on-film-directors-how-to

See also:

Data Journalism Tools Part 1: Extracting and Scraping Data

Tools for Data Visualization Part 2: Cleaning Data

Tools for Data Visualization part 3: Enhancing Data

The Whitney has just activated the 2016 version of TMS (upgraded from 2012). Due to my lack of admin privileges, I cannot uninstall the old version of TMS and replace it with the new. I don’t use TMS that frequently, but this issue is probably worth fixing nevertheless.

Set up joint meeting w/Cristina and Matt

Combine my and Josh’s Constituent sheets

Draft recommendation on name authorities to include in Whitney LOD (based on querying, Smithsonian, etc).

Create some kind of visualization (with Tableau?)

At Cristina’s suggestion, I used Doodle to try to schedule a meeting with everyone.

She also suggested everyone could Skype rather than meeting in person. Given everyone’s conflicting schedules, and that Prof. Pattuelli will be at the ARLIS Conference in New Orleans at the beginning of February, this may be a good solution.

My laptop, on which I’ve been running my Python queries and which has the OpenRefine desktop client installed, is unfortunately being repaired today. I’m still planning to do some work on tabular data today.

I’m not sure if my user privileges on the Whitney’s computers will enable me to install OpenRefine on my desktop computer here, but if nothing else I can work in Google Sheets.

Unfortunately, it is looking like my work is going to be limited to Google Sheets today.

Initially, I was a little confused how the two tables (Objects and Constituents) in Joshua’s MySQL database were related to each other, as their SQL doesn’t indicate any foreign keys. Constituent ID seems like it would be a natural foreign key in the Object table, for example.

As it turns out, Joshua used a PHP script to create a join:

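<?php
// Fetch one object row plus the constituentID of its artist. The tables have no
// foreign key, so the JOIN matches people.displayName against objects.Artist.
// The (int) cast guards against SQL injection, assuming object IDs are integers.
$query = "SELECT objects.*, people.constituentID FROM `objects` JOIN people ON people.displayName = objects.Artist WHERE `objectID` = " . (int)$objectID;
$result = $conn->query($query);
if (!$result) {
    die($conn->error);
}
$row = $result->fetch_assoc();
// print_r($row);
// Map database columns onto the variable names used when building JSON.
$name = $row['Title Sort'];
$creator = $row['Artist'];
$artform = $row['artform'];
$artMedium = $row['artMedium'];
$artworkSurface = $row['artworkSurface'];
$spatial = $row['Dimensions'];
$dateCreated = $row['Date'];
$accrualMethod = $row['Credit Line'];
$constituentID = $row['constituentID'];
?>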

I’m not really familiar with PHP, so I don’t understand the rationale for doing this join in a PHP query rather than making the tables relational with primary/foreign keys in SQL.
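For comparison, the JOIN in Joshua's query is itself SQL that MySQL executes. PHP only builds and sends the query string. What the tables lack is a declared key, so the join has to match artist names as text. Below is a minimal sqlite3 sketch of the keyed alternative, using hypothetical, pared-down tables and sample values.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces FKs only when enabled
conn.executescript("""
CREATE TABLE people (
    constituentID INTEGER PRIMARY KEY,
    displayName TEXT NOT NULL
);
CREATE TABLE objects (
    objectID INTEGER PRIMARY KEY,
    title TEXT,
    constituentID INTEGER REFERENCES people(constituentID)
);
""")
conn.execute("INSERT INTO people VALUES (1, 'Example Artist')")
conn.execute("INSERT INTO objects VALUES (23928, 'Example Title', 1)")


def object_with_artist(conn, object_id):
    """Join on the declared key rather than on matching name strings."""
    return conn.execute(
        """
        SELECT o.objectID, o.title, p.displayName, p.constituentID
        FROM objects AS o
        JOIN people AS p ON p.constituentID = o.constituentID
        WHERE o.objectID = ?
        """,
        (object_id,),
    ).fetchone()


print(object_with_artist(conn, 23928))  # (23928, 'Example Title', 'Example Artist', 1)
```

With the key in place, a misspelled or changed display name no longer breaks the link. The database also rejects an object that points to a nonexistent constituent.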

More on generating JSON from a MySQL database using PHP:

http://www.kodingmadesimple.com/2015/01/convert-mysql-to-json-using-php.html

Indexing a Generated Column to Provide a JSON Column Index:

Overview of using MySQL or PostgreSQL as a triple store:

http://rdfextras.readthedocs.io/en/latest/store/mysqlpg.html
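The simplest version of this idea is a single three-column table. The sketch below uses sqlite3 rather than MySQL so it runs without a server. Its schema is an assumption, not the one the linked rdfextras store uses. The self-join in `objects_transferred_from` shows the cost of the approach: every extra hop in a query becomes another join on the same table.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE triples (
    subject TEXT NOT NULL,
    predicate TEXT NOT NULL,
    object TEXT NOT NULL,
    PRIMARY KEY (subject, predicate, object)
);
-- Secondary index for lookups by property and value.
CREATE INDEX idx_predicate_object ON triples (predicate, object);
""")


def add(subject, predicate, obj):
    """Store one statement; duplicates are ignored."""
    conn.execute("INSERT OR IGNORE INTO triples VALUES (?, ?, ?)", (subject, predicate, obj))


def match(subject=None, predicate=None, obj=None):
    """Return statements matching a pattern; None acts as a wildcard."""
    clauses, params = [], []
    for column, value in (("subject", subject), ("predicate", predicate), ("object", obj)):
        if value is not None:
            clauses.append(f"{column} = ?")
            params.append(value)
    where = " WHERE " + " AND ".join(clauses) if clauses else ""
    sql = "SELECT subject, predicate, object FROM triples" + where
    return conn.execute(sql, params).fetchall()


def objects_transferred_from(actor):
    """Self-join: objects whose title an acquisition transferred from `actor`."""
    rows = conn.execute(
        """
        SELECT t2.object
        FROM triples AS t1
        JOIN triples AS t2 ON t2.subject = t1.subject
        WHERE t1.predicate = 'crm:P23_transferred_title_from' AND t1.object = ?
          AND t2.predicate = 'crm:P24_transferred_title_of'
        """,
        (actor,),
    )
    return [row[0] for row in rows]


# Hypothetical prefixed names standing in for full URIs.
add("wht:acquisition_1", "crm:P23_transferred_title_from", "wht:actor_1")
add("wht:acquisition_1", "crm:P24_transferred_title_of", "wht:object_1")
print(objects_transferred_from("wht:actor_1"))  # ['wht:object_1']
```
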

One random note: I somehow didn’t realize that PURL stands for Persistent Uniform Resource Locator.

I’m still waiting on a response from Matt regarding a meeting with everyone to discuss the Fellowship. I will ask him about it at the next Linked Jazz meeting on Thursday.

I made some good progress on Friday in working on a master spreadsheet with all Object and Acquisition-related constituents for the Founding Collection.

One issue I noticed in Joshua’s data involved three suites of prints in the Founding Collection (31.694.1-10, 33.83.1-6, 34.37.1-6). Each suite had only one Object ID, even though the prints in it are separate objects with separate creators.

Additionally, a smattering of artists with work in the Founding Collection were not listed in Joshua’s Artist Data spreadsheet and did not have Whitney IDs created for them.

I’ve started by trying to map Joshua’s Object-related constituents onto a CIDOC event structure.

CIDOC’s official URIs lead to dead links, despite an apparent plan by the International Council of Museums to implement redirects.

CIDOC does make the schema available as an RDFS file. I need to do more research on whether an RDFS file can serve as a persistent namespace, but that could be an option.

“ A source of honest confusion, however, is that RDF can be expressed as XML. Lassila’s note regarding the Resource Description Framework specification from the World Wide Web Consortium (W3C) states, “RDF encourages the view of ‘metadata being data’ by using XML (eXtensible Markup Language) as its encoding syntax.”4 So even though RDF can use XML to express resources that relate to each other via properties, identified with single reference points (URIs), RDF is itself not an XML schema. RDF has an XML language (sometimes called, confusingly, RDF, and from here forward called RDF/XML). Additionally, RDF Schema (RDFS) declares a schema or vocabulary as an extension of RDF/XML to express application-specific classes and properties.5 Simply speaking, RDF defines entities and their relationships using statements. There are various ways to make these statements, but the original way formulated by the W3C is using an XML language (RDF/XML) that can be extended by an additional XML schema (RDFS) to better define those relationships. Ideally, all parts of that relationship (the subject, predicate, object, or the resource, property, property value) are URIs pointing to an authority for that resource, that property, or that property value.”

Hardesty, J. (2016). Transitioning from XML to RDF: Considerations for an Effective Move Towards Linked Data and the Semantic Web. Information Technology & Libraries, 35(1), 51-64. http://search.ebscohost.com.ezproxy.pratt.edu:2048/login.aspx?direct=true&db=llf&AN=114479090&site=ehost-live

For now, I’m following suit with the British Museum and using the Erlangen mapping of CIDOC to OWL for CIDOC class namespaces.

Erlangen’s URIs, however, just initiate a download of a namespace file with the whole schema, rather than leading to URIs for individual classes and properties.

Another solution I’ve considered is using Dublin Core terms to fill in for CIDOC classes/properties, as Dublin Core does provide persistent URIs for terms in its ontology.

There’s also this OWL ontology for provenance:

https://www.w3.org/TR/prov-o/#description

http://openorg.ecs.soton.ac.uk/wiki/Linked_Data_Basics_for_Techies

Another XML mapping:

http://ws.nju.edu.cn/falcons/ontologysearch/details/recommendation.jsp?id=13022081

I am continuing to work on cleaning the Whitney’s constituent data in OpenRefine and Google Sheets.

I started looking into Google Fusion Tables, which seems like a good alternative to Tableau as a geocoding application.

Possible source for data cleaning/reconciliation info:

As per this discussion (https://groups.google.com/forum/#!topic/openrefine/GwCGTM3NGOQ), there is apparently a version of OpenRefine specifically designed for Linked Data:

https://sourceforge.net/projects/lodrefine/?source=navbar

I also borrowed an e-Edition of this book:

http://book.freeyourmetadata.org/

Notes from Hooland, S. v., & Verborgh, R. (2014). Linked Data for Libraries, Archives and Museums : How to Clean, Link and Publish Your Metadata. London: Facet Publishing:

Page 23: The authors recommend one table per entity for a linked data database, as per basic relational database guidelines. In my database, I’m thinking of Events (artwork creation, donation, purchase, etc.) as entities. Indeed, in CIDOC, the E5 Event class is an indirect subclass of E1 CRM Entity, by way of E4 Period and E2 Temporal Entity.

Any CIDOC class could potentially be its own table by this thinking.

My current tables correspond to the following CIDOC Entities:

Subtypes of events could potentially be their own tables:

Role E55 Type could be its own table, as the same actor may have multiple roles in relation to the same object

The Role or Event tables could basically be like associative tables (https://en.wikipedia.org/wiki/Associative_entity)

Since Joshua has artist birth and death dates and location:

Basically, with CIDOC, I should think of things in terms of Class=Table and Property=Column, to represent things with a relational structure that makes sense.

Or rather, each table is a CIDOC Class (AKA entity). Within tables, each Property is a column. These columns are populated by Classes, unless there exists a many-to-many relationship between the Class entities, in which case they get their own table.

Subject = Table

Predicate = Column

Object = URI or literal
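Here is a minimal Python sketch of this convention, emitting N-Triples. The table names the class, each row's key becomes the subject URI, each mapped column becomes a CIDOC property, and each cell becomes the object. The base URI and column names are hypothetical. Treating any value that starts with http as a URI is a simplifying assumption.

```python
BASE = "http://example.org/whitney/"  # hypothetical base URI
CRM = "http://www.cidoc-crm.org/cidoc-crm/"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"


def term(value):
    """Render a cell as an N-Triples URI if it looks like one, else a literal."""
    if value.startswith(("http://", "https://")):
        return f"<{value}>"
    escaped = value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")
    return f'"{escaped}"'


def row_to_triples(class_name, key_column, row, property_map):
    """Table = class, row = subject, column = property, cell = object."""
    subject = f"<{BASE}{class_name}/{row[key_column]}>"
    triples = [f"{subject} <{RDF_TYPE}> <{CRM}{class_name}> ."]
    for column, prop in property_map.items():
        value = row.get(column)
        if value:  # skip empty cells instead of emitting empty literals
            triples.append(f"{subject} <{CRM}{prop}> {term(value)} .")
    return triples


row = {
    "objectID": "23928",
    "note": "Example note",
    "production": "http://example.org/whitney/E12_Production/23928",
}
for line in row_to_triples(
    "E22_Man-Made_Object",
    "objectID",
    row,
    {"note": "P3_has_note", "production": "P108i_was_produced_by"},
):
    print(line)
```

Many-to-many tables, such as roles, would be handled the same way, with each association row becoming its own subject (for example, an event) rather than a column on another table.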

As of the latest CIDOC release, issued this month, the E82 Actor Appellation class has been deprecated in favor of the generic E41 Appellation.

CIDOC property P48 has preferred identifier (is preferred identifier of) should define the primary key of any given table

For now, I am going to focus on making sure all my table columns conform to a CIDOC property.

The CIDOC Class Hierarchy: http://cidoc-crm.org/cidoc_graphical_representation_v_5_1/class_hierarchy.html

Database Setup

I decided to join METRO, through which I’m hoping to get access to Lynda.com and take some online courses in database management.

Specifically, I’m interested in this course (https://www.lynda.com/NoSQL-tutorials/NoSQL-SQL-Professionals/368756-2.html), which is focused on NoSQL for people with SQL backgrounds, and which touches specifically on the management of museum data.

There’s also this course: (https://www.lynda.com/NoSQL-tutorials/Up-Running-NoSQL-Databases/111598-2.html)

METRO only has 8 licenses, however, and I’m not sure what the turnaround time for these is, so I may just sign up for Lynda independently.

UPDATE – Pratt apparently provides access to Lynda as well:

http://libguides.pratt.edu/lynda

Interoperability w/ SAAM – what resources does SAAM have that Whitney doesn’t?

SAAM URIs for artists tend to have photos – do something with photos?

Distinguish between Person and Corporate Body

I’m continuing the work I started last week, focused on normalizing my and Joshua’s combined data by splitting each CIDOC entity class into its own sheet (which will be the basis of a MySQL table eventually)

As I work on the data in Google Sheets, I am creating an ER Diagram in MySQL Workbench to keep track of what data is in what sheet/table:

Hooland, S. v., & Verborgh, R. (2014), p. 29 mentions the Open Graph protocol:

Hooland, S. v., & Verborgh, R. (2014) touch on the virtues of XML vs. JSON for encoding linked data. Joshua opted for JSON encoding in his work, and Prof. Pattuelli seems to prefer it as well.

According to Hooland and Verborgh, p. 42-43, data exchange on the internet occurs mostly in JSON, and JSON has an inherently hierarchical structure.

Regardless of whether JSON or XML is used to encode linked data, outside users have no idea what elements mean without the existence of a namespace.

Hooland and Verborgh are in favor of Turtle as an encoding syntax (p. 47)
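To show why Turtle reads well, here is a small serializer that groups a subject's statements with `;` and abbreviates URIs with prefixes. It handles URI objects only and uses a conservative rule for deciding when a prefixed name is safe. In practice, a library such as rdflib (`Graph.serialize(format="turtle")`) would also handle literals and escaping. The `wht:` namespace is a hypothetical placeholder.

```python
import re
from collections import defaultdict

RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
PREFIXES = {
    "crm": "http://www.cidoc-crm.org/cidoc-crm/",
    "wht": "http://example.org/whitney/",  # hypothetical Whitney namespace
}
# Conservative subset of characters allowed in a Turtle prefixed local name.
SAFE_LOCAL = re.compile(r"[A-Za-z0-9_][A-Za-z0-9_-]*")


def shorten(uri):
    """Return a prefixed name when safe, otherwise a full <URI>."""
    for prefix, ns in PREFIXES.items():
        if uri.startswith(ns) and SAFE_LOCAL.fullmatch(uri[len(ns):]):
            return f"{prefix}:{uri[len(ns):]}"
    return f"<{uri}>"


def to_turtle(triples):
    """Serialize URI-only (subject, predicate, object) triples as Turtle,
    grouping each subject's statements with ';'."""
    by_subject = defaultdict(list)
    for s, p, o in triples:
        by_subject[s].append((p, o))
    lines = [f"@prefix {prefix}: <{ns}> ." for prefix, ns in PREFIXES.items()]
    for s, pairs in by_subject.items():
        statements = [
            f"{'a' if p == RDF_TYPE else shorten(p)} {shorten(o)}" for p, o in pairs
        ]
        lines.append("")
        lines.append(f"{shorten(s)} " + " ;\n    ".join(statements) + " .")
    return "\n".join(lines)


obj = PREFIXES["wht"] + "object_23928"
print(to_turtle([
    (obj, RDF_TYPE, PREFIXES["crm"] + "E22_Man-Made_Object"),
    (obj, PREFIXES["crm"] + "P108i_was_produced_by", PREFIXES["wht"] + "production_23928"),
]))
```

The same two statements written as N-Triples would repeat the full subject URI on every line. This is the readability gap Hooland and Verborgh point to.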

Hooland, S. v., & Verborgh, R. (2014), p. 52

Fortunately, CIDOC seems to have finally added back pages for its individual entity classes and properties:

http://www.cidoc-crm.org/Version/version-6.2

Unfortunately, I don’t know if these pages can be considered persistent URIs, since each entity and property page URI ends with “/version-6.2”, and pages will not resolve without it:

http://www.cidoc-crm.org/Entity/e2-temporal-entity/version-6.2

http://www.cidoc-crm.org/Property/p118-overlaps-in-time-with/version-6.2

Still, it is helpful at least in the conceptual mapping of the Whitney’s data to be able to quickly view the properties that can be applied to each entity class, and to view subclasses and superclasses of entities, without having to manually scroll through a huge PDF.

I finally finished separating data for each CIDOC Entity class with a many-to-many relationship with another into its own table/Google Sheet. I went pretty granular, ending up with 14 different sheets:

Used for non-purchase acquisition events.

Used to represent the creation of an artwork.

(this could be problematic if used outside the context of the Founding Collection for newer items that don’t have a physical component)

Used to represent art objects in the Whitney’s collection.

Used to represent the unique Whitney identifier for any kind of Constituent (Object, Acquisitions, and Ex-Collections-related).

Used to record non-Whitney unique identifiers for Constituents and the name authority domains to which they belong (currently LC, VIAF, ULAN, Wikidata; potentially the Smithsonian American Art Museum in the future).

Used to record external URIs for Constituents on name authority sites.

Used to record the names and GeoNames IDs for the birth and death locations of artists. Could also be used for any other place identifiers like object locations.

Used to record dimensions of objects. Objects may have more than one listed dimension in TMS (framed vs. unframed, inches vs. cm), hence the need for a separate table.

Used to record role type (artist, donor, art dealer, etc) of an Actor in a given event. Vocabulary for these roles is from the Getty AAT.

Used to record the material components of art objects.

Used to record Constituent birth dates and locations.

Used to record Constituent death dates and locations. Also includes links to the NYTimes Obituaries Joshua found.

(deprecated in the latest version of CIDOC still in development [Version 6.2.2] in favor of the broader E41 Appellation)

Records the Display Names of Constituents along with any alternative forms.

(a new Event class being added in CIDOC Version 6.2.2)

Used to record information about the purchase of objects.

I could definitely simplify this conceptual model at a later point, but I figure that since the main audience for this data would be art historical researchers, maybe more specificity would be beneficial.

Neo4j apparently allows you to import CSV files directly and make table joins with its SQL-like query language, Cypher:

https://neo4j.com/developer/guide-importing-data-and-etl/

Given that, I may just import my CSV data directly into Neo4j after cleaning it in OpenRefine rather than bothering to make a MySQL database first. Starting with an ER diagram may be helpful, however.

Neo4j is also designed as more of a “big data” database.

CouchDB is another popular NoSQL system I see mentioned a lot.

CouchDB doesn’t support SPARQL either, however, instead relying on a JSON-based query language.

The Metaphacts database used by the Drawings of the Florentine Painters project is built on top of a Blazegraph database.

Blazegraph is available as an open-source download. It also supports SPARQL queries.

It does seem somewhat harder to set up than something like Neo4j, however.

Update: I installed Blazegraph easily, and it has a straightforward local interface. However, I’m not really sure what its applicability is, if any.

At first glance, OrientDB seems like it might have an easier to use interface. I will download it and give it a shot.

OrientDB took some time to install. You have to install a server and console client locally to use it:

http://orientdb.com/docs/last/

At first glance, OrientDB seems more familiar and usable than previous graph databases I’ve looked at.

You can define Classes, for example (http://orientdb.com/docs/last/)

I’m not really sure if and how OrientDB supports linked data, however, which is obviously an issue.

The number of database options is kind of overwhelming, quite frankly.

This is a helpful overview:

http://db-engines.com/en/ranking/rdf+store

As per this upcoming Museums and the Web workshop (http://mw17.mwconf.org/proposal/innovative-applications-and-data-sharing-with-linked-open-data-in-museums-exploring-principles-and-examples/), Omeka apparently supports RDF exporting in some form.

I’ve never used Omeka, but will be using it in one of my courses later this semester. I’m not sure how viable it is for publishing linked data, but it’s worth exploring.

Also interesting:

http://mw17.mwconf.org/proposal/thinking-in-cidoc-crm/

CSV -> RDF Lib

Deliverable – report on how to model provenance

Gephi

Art dealers -> starting point for other connection

Ask Maggie more about provenance; where do they get their provenance info?

Don’t stress about databases

Opportunity to question how museum does things

Movement of objects over 10 year span

Provenance in different schemas (Dublin Core, etc)

Interview people

Method of provenance
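The “CSV -> RDF Lib” note refers to converting the spreadsheets to RDF in Python. RDFLib could handle this with proper serializers. To stay dependency-free, the sketch below writes N-Triples lines by hand with the standard csv module. The following are all assumptions for illustration:
- the column names (ObjectID, ConstituentID, Title);
- the http://example.org/whitney/ base URI;
- the choice of Erlangen CRM classes and properties.

```python
import csv
import io

# Hypothetical base for Whitney URIs; Erlangen CRM is the OWL version of CIDOC CRM.
BASE = "http://example.org/whitney/"
ECRM = "http://erlangen-crm.org/current/"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
RDFS_LABEL = "http://www.w3.org/2000/01/rdf-schema#label"


def literal(text: str) -> str:
    """Quote a string as an N-Triples literal, escaping the required characters."""
    escaped = (
        text.replace("\\", "\\\\")
        .replace('"', '\\"')
        .replace("\n", "\\n")
        .replace("\r", "\\r")
    )
    return f'"{escaped}"'


def csv_to_ntriples(csv_text: str) -> list[str]:
    """Turn each CSV row into an object, its label, and a production event."""
    triples = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        obj = f"<{BASE}object/{row['ObjectID']}>"
        production = f"<{BASE}production/{row['ObjectID']}>"
        artist = f"<{BASE}constituent/{row['ConstituentID']}>"
        triples += [
            f"{obj} <{RDF_TYPE}> <{ECRM}E22_Man-Made_Object> .",
            f"{obj} <{RDFS_LABEL}> {literal(row['Title'])} .",
            f"{production} <{RDF_TYPE}> <{ECRM}E12_Production> .",
            f"{production} <{ECRM}P108_has_produced> {obj} .",
            f"{production} <{ECRM}P14_carried_out_by> {artist} .",
        ]
    return triples


sample = 'ObjectID,ConstituentID,Title\n1,100,"Untitled, No. 1"\n'
lines = csv_to_ntriples(sample)
print("\n".join(lines))
```

Modelling the artist through a production event, rather than a direct object-to-artist link, follows CIDOC’s event-centric approach.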

Realistically, it’s not really within the scope of the fellowship (or my technical abilities) to try to implement a database like I’ve been hung up on.

A good goal for the project would be to present a few examples of what the Whitney’s linked data could be, and to outline the methodology of how I came up with these model(s).

As far as a visual/deliverable, a Gephi network graph is always a good option.
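Gephi’s spreadsheet import can read an edge list whose columns are named Source and Target, with Type and Weight optional. A provenance network therefore mostly comes down to reshaping transfer records. This is a minimal sketch that assumes the transfers have already been pulled out as (from, to) pairs. The names are placeholders, not Whitney data.

```python
import csv
import io
from collections import Counter


def gephi_edges(transfers: list[tuple[str, str]]) -> str:
    """Aggregate (from_owner, to_owner) pairs into a Gephi edge table as CSV text.

    Repeated transfers between the same two parties become a single edge whose
    Weight is the number of times that transfer occurs in the data.
    """
    counts = Counter(transfers)
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["Source", "Target", "Type", "Weight"])
    for (source, target), weight in sorted(counts.items()):
        writer.writerow([source, target, "Directed", weight])
    return buf.getvalue()


# Placeholder names; real rows would come from the provenance/event sheet.
sample = [
    ("Artist A", "Dealer B"),
    ("Dealer B", "Collector C"),
    ("Dealer B", "Collector C"),
]
edges_csv = gephi_edges(sample)
print(edges_csv)
```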

Additionally, if I’m seeking to narrow down what elements I want to incorporate into the Whitney’s dataset, it might be helpful to talk to someone who works with the museum’s collection files (i.e., Maggie) or someone in the curatorial department to get a better sense of what the museum’s needs are, and how linked data could assist researchers and museum staff in accessing the information they need.

The Getty has the sales records of Knoedler Gallery available as a CSV file on GitHub. They also have a ton of other provenance tools:

Overview of provenance-related datasets and search tools at the Getty:

http://www.getty.edu/research/tools/provenance/search.html

The Getty’s GitHub repository, where they plan to eventually make other provenance datasets available:

https://github.com/gettyopendata/provenance-index-csv

http://www.getty.edu/research/tools/provenance/faq.html#download

A Gephi-style network diagram:

http://www.getty.edu/research/tools/provenance/zoomify/index.html

http://piprod.getty.edu/starweb/collectors/servlet.starweb?path=collectors/collectors.web

All collectors in the above source seem to have ULAN URIs.

Getty Provenance Index Remodel Project – started late last year, they’re eventually aiming to publish everything as linked data:

http://www.getty.edu/research/tools/provenance/provenance_remodel/index.html

I’m thinking it might be interesting to compare the Getty’s Knoedler dataset with the Whitney’s. While none of the Whitney Founding Collection objects came from Knoedler, they were an influential 19th- and early 20th-century gallery, and sold to Gertrude Vanderbilt Whitney’s father/grandfather(?) Cornelius (http://www.artnews.com/2016/04/25/the-big-fake-behind-the-scenes-of-knoedler-gallerys-downfall/), among other influential robber barons of the time. I imagine there might be some overlap between artists or collectors in the two datasets, if nothing else.

Strangely, a cursory search of TMS reveals that Knoedler Gallery has only one related object in the database (a Jasper Johns artist book, ID 84.52).

The Getty’s provenance resources also skew heavily towards pre-20th century sources (presumably due to copyright issues) and records from European auction houses.

The Knoedler sales books do encompass the years the Whitney Founding Collection was amassed, as well as the decades before and after, so hopefully the Getty’s Knoedler dataset has some kind of linkage with the Whitney’s.
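A first pass at finding overlap could be as simple as normalizing names from both CSVs and intersecting the results. This rough sketch assumes each file has a single name column. The column names are hypothetical and the names are placeholders, not real dataset contents. Naive matching like this will miss spelling variants and mishandle suffixes such as “Jr.”, so reconciling against ULAN would be more reliable.

```python
import csv
import io
import unicodedata


def normalize(name: str) -> str:
    """Naive name key: flip 'Last, First', strip accents and punctuation, lowercase."""
    if "," in name:
        last, _, first = name.partition(",")
        name = f"{first} {last}"
    decomposed = unicodedata.normalize("NFKD", name)
    no_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    cleaned = "".join(ch if ch.isalnum() else " " for ch in no_accents)
    return " ".join(cleaned.lower().split())


def name_keys(csv_text: str, column: str) -> set[str]:
    """Collect normalized, non-empty names from one column of a CSV string."""
    rows = csv.DictReader(io.StringIO(csv_text))
    return {normalize(row[column]) for row in rows if row[column].strip()}


# Hypothetical column names and placeholder names, not real dataset contents.
whitney_csv = "DisplayName\nJane Doe\nÉmile Exemple\nJohn Roe\n"
knoedler_csv = 'artist_name\n"DOE, JANE"\n"Exemple, Emile"\nRichard Miles\n'

overlap = name_keys(whitney_csv, "DisplayName") & name_keys(knoedler_csv, "artist_name")
print(sorted(overlap))
```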

As the README in the Getty’s GitHub repository notes, the Carnegie Museum also has their collection data available in both CSV and JSON format:

https://github.com/cmoa/collection

To go about connecting the Whitney’s dataset to the Getty’s and/or Carnegie’s, I would have to use a similar approach to what Hannah and Molly did for their Program for Cultural Heritage project:

https://github.com/MollieEcheverria/CH-LJ/blob/master/README.txt

I might need to query for URIs for the Getty/Carnegie names first.

Before I get into external datasets, I am going to start working with the Whitney’s data using OpenRefine.

I’m using this book as a reference:

Verborgh, R., De Wilde, M., & Sawant, A. (2013). Using OpenRefine: The Essential OpenRefine Guide That Takes You From Data Analysis and Error Fixing to Linking Your Dataset to the Web. Birmingham, England: Packt Publishing. Retrieved from http://search.ebscohost.com.ezproxy.pratt.edu:2048/login.aspx?direct=true&db=nlebk&AN=639455&site=ehost-live&ebv=EK&ppid=Page-__-20

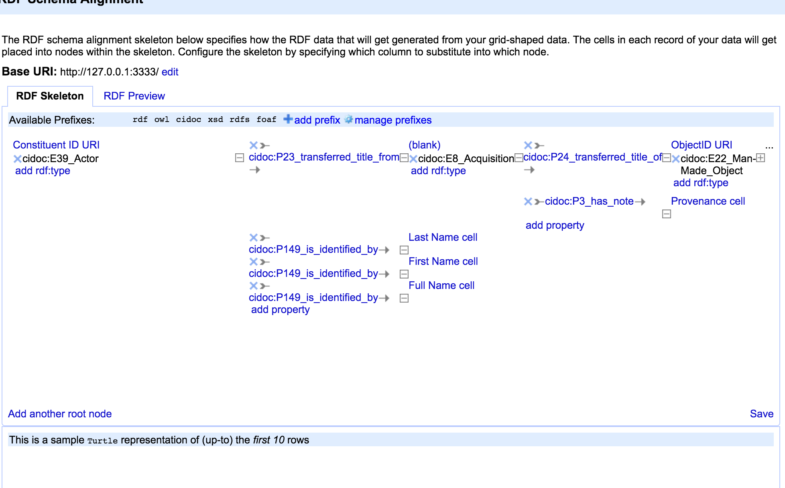

A preliminary look at the RDF Refine extension for OpenRefine (http://refine.deri.ie/) is promising. I tried plugging in the BloodyBite CIDOC mapping namespace I think I mentioned previously (http://bloody-byte.net/rdf/cidoc-crm/core_5.0.1#) to get CIDOC classes/properties:

Sidenote: this namespace lookup resource looks helpful as well:

The Erlangen OWL mapping used by the British Museum works well too:

Since I have all the columns in my Google Sheets aligned with CIDOC properties, the RDF schema alignment process should hopefully be pretty easy.

This plugin lets you identify entity nodes as well, so I could probably just simplify things and recombine all my separate sheets into one CSV file rather than having one sheet per entity:

https://en.wikipedia.org/wiki/Node_(computer_science)

https://en.wikipedia.org/wiki/Linked_data_structure
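Recombining the per-entity sheets could be done with a small join script before loading the result into OpenRefine. This sketch assumes every sheet shares an ObjectID column (a hypothetical name). When a sheet has several rows for one object, such as multiple dimensions, the values are joined with “|”. OpenRefine’s split multi-valued cells operation can separate them again later.

```python
import csv
import io


def merge_on_key(key: str, base_csv: str, *other_csvs: str) -> str:
    """Left-join extra CSV sheets onto a base sheet by a shared key column."""
    base = csv.DictReader(io.StringIO(base_csv))
    fieldnames = list(base.fieldnames)
    merged = {row[key]: dict(row) for row in base}

    for text in other_csvs:
        reader = csv.DictReader(io.StringIO(text))
        extra = [f for f in reader.fieldnames if f != key]
        fieldnames += [f for f in extra if f not in fieldnames]
        for row in reader:
            target = merged.get(row[key])
            if target is None:
                continue  # no matching record in the base sheet
            for field in extra:
                # Multiple rows for one key become a '|'-separated value.
                if target.get(field):
                    target[field] = f"{target[field]}|{row[field]}"
                else:
                    target[field] = row[field]

    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=fieldnames, restval="", lineterminator="\n")
    writer.writeheader()
    writer.writerows(merged.values())
    return out.getvalue()


objects = "ObjectID,Title\n1,Untitled A\n2,Untitled B\n"
dimensions = "ObjectID,Dimensions\n1,30 x 40 in\n1,76.2 x 101.6 cm\n"
materials = "ObjectID,Material\n2,oil on canvas\n"
combined = merge_on_key("ObjectID", objects, dimensions, materials)
print(combined)
```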

OpenRefine also does Wikidata reconciliation!

You can reconcile against SPARQL endpoints too! (https://github.com/OpenRefine/OpenRefine/wiki/Reconcilable-Data-Sources)

Adding name reconciliation sources: http://iphylo.blogspot.com/2012/02/using-google-refine-and-taxonomic.html
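Reconciliation can also run the other way: start from an identifier already in hand and ask Wikidata’s SPARQL endpoint for the matching item. Wikidata records ULAN IDs under property P245. This sketch uses only the standard library and assumes the ULAN IDs have already been collected. The User-Agent string is a placeholder, and only lookup() touches the network.

```python
import json
import urllib.parse
import urllib.request

WIKIDATA_SPARQL = "https://query.wikidata.org/sparql"


def ulan_query(ulan_id: str) -> str:
    """SPARQL for the Wikidata item carrying a given ULAN ID (property P245)."""
    if not ulan_id.isdigit():
        raise ValueError("ULAN IDs are numeric")
    return (
        "SELECT ?item ?itemLabel WHERE {\n"
        f'  ?item wdt:P245 "{ulan_id}" .\n'
        '  SERVICE wikibase:label { bd:serviceParam wikibase:language "en". }\n'
        "}"
    )


def build_request(ulan_id: str) -> urllib.request.Request:
    """Prepare (but do not send) a GET request asking for SPARQL JSON results."""
    url = WIKIDATA_SPARQL + "?" + urllib.parse.urlencode({"query": ulan_query(ulan_id)})
    return urllib.request.Request(
        url,
        headers={
            "Accept": "application/sparql-results+json",
            # Wikimedia asks clients to identify themselves; this value is a placeholder.
            "User-Agent": "whitney-lod-sketch/0.1 (placeholder contact)",
        },
    )


def lookup(ulan_id: str) -> list[dict]:
    """Run the query (needs network access) and return the JSON result bindings."""
    with urllib.request.urlopen(build_request(ulan_id)) as response:
        return json.load(response)["results"]["bindings"]
```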

You can even generate RDF/XML or Turtle files from OpenRefine (no JSON-LD, sadly)

Publishing these RDF files on GitHub might be a simple preliminary way to share the Whitney’s data.

Or, I could use something like this (https://github.com/semsol/arc2/wiki), though that might be again getting hung up on publication/databases.
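OpenRefine’s RDF export doesn’t include JSON-LD. One option is an online converter such as RDF Translator; another is a small script. The sketch below groups triples by subject into expanded-form JSON-LD using only the json module. It assumes any object starting with http(s):// is an IRI and everything else is a literal, which is a simplification. The URIs are placeholders.

```python
import json

RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
RDFS_LABEL = "http://www.w3.org/2000/01/rdf-schema#label"
ECRM = "http://erlangen-crm.org/current/"


def to_jsonld(triples: list[tuple[str, str, str]]) -> list[dict]:
    """Group (subject, predicate, object) triples into expanded-form JSON-LD nodes."""
    nodes: dict[str, dict] = {}
    for subject, predicate, obj in triples:
        node = nodes.setdefault(subject, {"@id": subject})
        if predicate == RDF_TYPE:
            # Expanded JSON-LD expresses rdf:type with the @type keyword.
            node.setdefault("@type", []).append(obj)
        else:
            # Simplification: anything that looks like an http(s) IRI is a node reference.
            is_iri = obj.startswith(("http://", "https://"))
            node.setdefault(predicate, []).append({"@id": obj} if is_iri else {"@value": obj})
    return list(nodes.values())


obj_uri = "http://example.org/whitney/object/1"
doc = to_jsonld([
    (obj_uri, RDF_TYPE, ECRM + "E22_Man-Made_Object"),
    (obj_uri, RDFS_LABEL, "Untitled"),
])
print(json.dumps(doc, indent=2))
```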

It’s a bit tricky in practice:

In working with OpenRefine, I realize all my spreadsheets are a little out of control in terms of granularity.

Recombine spreadsheets into three main sheets (Constituent, Object, Event)

Do name reconciliation/RDF schema layout stuff in OpenRefine

Feed sheet into Gephi and make visualization.

Also spit out some RDF/XML files and convert them to N-Triples/JSON-LD

http://rdf-translator.appspot.com/

Clean and reconcile Getty/Carnegie data with OpenRefine too and try to make some connections.

Make a Gephi visualization mapping the connections

To watch: a video discussing the Getty’s linked data initiative: https://www.youtube.com/watch?v=1HRbP4zjqPM

I had the opportunity to attend a curator-led staff tour of one of the Whitney’s current exhibitions before lunch. Not directly related to project work, but interesting to get some context on the work in the show and trends of the era.

Prof. Pattuelli notified me of a livestreaming talk on 02/27/17 at the Yale Center for British Art about the Carnegie Museum Provenance project.

Newbury, D. (2017, February 27). Standardizing Museum Provenance for the Twenty-First Century. New Haven, CT: Yale Center for British Art. Retrieved from https://youtu.be/YKJqINwZ--o

David Newbury, who gave the talk, is the lead project developer of the ArtTracks project at the Carnegie, which has been ongoing for the past 3.5 to 4 years.

The Carnegie project has taken much longer than Newbury had anticipated. Coming from an animation and data visualization background, Newbury guessed the project would take only a matter of weeks or months, not realizing the complexity of provenance data.

The Carnegie Museum’s linked data was not necessarily envisioned as linked open data.

The Carnegie does, however, recognize the need for sharing data across institutions.

What is the value of museums sharing their data with the public?

Are museums:

Fundamentally, however, museums are collectors.

Despite earlier hopes of researchers, linked data has not been successful in enabling web-scale AI (i.e., it hasn’t made the internet machine-readable).

Nor does it enable interoperability/easier collaboration.

Nor does it automate reconciliation/reduce workload.

Linked data is one of multiple ways of potentially representing data, each with its own pros and cons.

Aim of Carnegie project was to standardize provenance data so it could be presented as:

Need to preserve the nuances of provenance data contained within text

Advantage One: Allows for linking to other authorities

Linking to outside authorities lends the museum itself authority (i.e., it certifies that the museum is providing accurate information to patrons).

Name authorities allow museums to provide authoritative info without having to spend money on research.

Name authorities = Museum saves money!

A museum is best suited to asserted authority – being an authority on the objects in its own collection, its own exhibitions, and other events that have taken place during the course of the museum’s existence.

Authority on things outside the sphere of an individual institution and its collection is better delegated to other sources. This delegated authority can be covered by various name authority sources (VIAF, ULAN, etc.).

Reluctant authority – when you have to be the authority on a subject for which there are no authority records. For example, obscure constituents that only the curatorial department knows about, such as random art galleries from the 1930s.

As a reluctant authority, you are taking the reins as an authority until someone else publishes more definitive information.

“Temporary authority held out of necessity, not desire”

museum_provenance (https://github.com/arttracks/museum_provenance):

“The museum_provenance library is the core technology developed for this project. It takes provenance records and converts them into structured, well-formatted data.”- http://www.museumprovenance.org/

Tool for parsing provenance relationships from text fields (such as the TMS Provenance field). For my purposes, probably more useful at a later stage of the project.
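museum_provenance itself is a Ruby library, so the following is not its API. It is a toy Python illustration of the underlying idea. It assumes the punctuation convention common in museum provenance texts, which as I understand it is the one the Carnegie’s tools build on. Under that convention, a semicolon means the object passed directly to the next owner, and a period marks a gap where the transfer isn’t documented. The sample text is invented.

```python
import re
from dataclasses import dataclass


@dataclass
class ProvenanceEntry:
    text: str
    direct_transfer: bool  # True if followed by ';' (direct transfer to the next owner)


def parse_provenance(text: str) -> list[ProvenanceEntry]:
    """Split a provenance paragraph into ordered entries on ';' and '.' delimiters.

    Only punctuation followed by whitespace or end of text counts, so decimals
    survive, but abbreviations like 'Mrs.' would still be split (a known gap).
    """
    parts = re.split(r"([;.])(?:\s+|$)", text.strip())
    # With a capture group, re.split alternates: entry, delimiter, ..., trailing text.
    entries = []
    for i in range(0, len(parts) - 1, 2):
        entry = parts[i].strip()
        if entry:
            entries.append(ProvenanceEntry(entry, parts[i + 1] == ";"))
    trailing = parts[-1].strip()
    if trailing:
        entries.append(ProvenanceEntry(trailing, False))
    return entries


sample = ("Artist A, New York; sold to Dealer B, New York, 1925; "
          "purchased by Collector C. Gift to the museum, 1931.")
for entry in parse_provenance(sample):
    print(entry)
```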

Elysa (https://github.com/arttracks/elysa):

“The Elysa tool is a user interface designed for museum professionals. It assists with verifying, cleaning, and modifying provenance records.”

It’s a GUI for extracting provenance information from text fields

MicroAuthority (https://github.com/arttracks/microauthority): Linked open data publication tool developed for smaller institutions looking to create URIs for constituents who don’t have records on any name authority sites. Given how obscure many of the Acquisition-Related constituents in the Founding Collection are, creating URIs for these people could be a goal of my project.
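Minting local identifiers, in the spirit of MicroAuthority but without using it, could start simply. Every constituent without a ULAN or VIAF ID gets a stable URI built from its TMS constituent ID, with the display name attached as a label. The namespace and field names below are hypothetical. The URIs are opaque and ID-based rather than name-based, so correcting a misspelled name doesn’t change the identifier.

```python
BASE = "https://example.org/whitney/constituent/"  # hypothetical namespace
RDFS_LABEL = "http://www.w3.org/2000/01/rdf-schema#label"


def escape_literal(text: str) -> str:
    """Escape backslashes and quotes for an N-Triples string literal."""
    return text.replace("\\", "\\\\").replace('"', '\\"')


def mint_local_authorities(constituents: list[dict]) -> tuple[dict, list[str]]:
    """Mint opaque local URIs for constituents that lack an external authority ID."""
    uris, triples = {}, []
    for c in constituents:
        if c.get("ulan") or c.get("viaf"):
            continue  # authority is delegated to ULAN/VIAF
        uri = f"{BASE}{c['id']}"
        uris[c["id"]] = uri
        triples.append(f'<{uri}> <{RDFS_LABEL}> "{escape_literal(c["name"])}" .')
    return uris, triples


# Placeholder records; real ones would come from the Constituent sheet.
constituents = [
    {"id": 101, "name": "Example Gallery", "ulan": None},
    {"id": 102, "name": "Jane Doe", "ulan": "500000000"},
]
uris, triples = mint_local_authorities(constituents)
print(uris)
print("\n".join(triples))
```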

provenance_interactive (https://github.com/arttracks/provenance-interactive): Tool for creating visualizations of provenance information. Probably not too exciting for the Whitney’s purposes, since everything in the Founding Collection is American and made within the last couple of centuries.

Baring_art_sales (https://github.com/arttracks/baring_art_sales): Data on the purchases of the Baring family in CSV and JSON form. Doesn’t really extend to the Whitney Founding Collection era (the latest date is 1917), but interesting because it employs the Carnegie’s Acquisition Method Vocabulary, an ontology created for the project.

https://github.com/whosonfirst-data/whosonfirst-data

The Carnegie’s SKOS (http://www.museumprovenance.org/acquisition_methods.ttl) for their ontology seems mostly focused on very specific acquisition methods. It seems like it would make more sense as a supplement to CIDOC than as a stand-alone conceptual model.
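Using the Carnegie vocabulary as a supplement to CIDOC might look like an ordinary E8 Acquisition whose method is attached with P2 has type. The sketch below writes this in N-Triples using Erlangen CRM names. The acquisition, object, and party URIs are placeholders. The method concept URI is a hypothetical stand-in, not a real term from acquisition_methods.ttl.

```python
ECRM = "http://erlangen-crm.org/current/"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
EX = "http://example.org/whitney/"  # hypothetical namespace

# Hypothetical stand-in for a concept from the Carnegie's acquisition-method SKOS.
METHOD_SALE = "http://example.org/acquisition_methods/sale"


def acquisition_triples(acq_id: str, object_id: str, seller_id: str,
                        buyer_id: str, method_uri: str) -> list[str]:
    """Model one transfer of title as a CIDOC E8 Acquisition typed by a SKOS concept."""
    acq = f"<{EX}acquisition/{acq_id}>"
    return [
        f"{acq} <{RDF_TYPE}> <{ECRM}E8_Acquisition> .",
        f"{acq} <{ECRM}P24_transferred_title_of> <{EX}object/{object_id}> .",
        f"{acq} <{ECRM}P23_transferred_title_from> <{EX}constituent/{seller_id}> .",
        f"{acq} <{ECRM}P22_transferred_title_to> <{EX}constituent/{buyer_id}> .",
        # The specialized vocabulary only supplies the kind of acquisition.
        f"{acq} <{ECRM}P2_has_type> <{method_uri}> .",
    ]


triples = acquisition_triples("a1", "1", "200", "300", METHOD_SALE)
print("\n".join(triples))
```

CIDOC keeps the event structure (who, what, from whom), while the specialized vocabulary only refines the type. This is the division of labor suggested above.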

All of the Carnegie’s apps are built on Ruby. To install Ruby, I followed these instructions: http://railsapps.github.io/installrubyonrails-mac.html

Gems in Ruby: http://guides.rubygems.org/what-is-a-gem/

Ruby is…time consuming to install

Trying to install the Carnegie’s Elysa app, but it keeps using an older, incompatible version of Ruby

Eventually uninstalled/reinstalled Ruby, but was still unable to run the Foreman server.

The localhost IP address for OpenRefine, for future reference: http://127.0.0.1:3333/